You probably swipe and tap your phone thousands of times a day. Your phone dutifully records every single one of those interactions. But until now, it didn't have a clue how much effort you were actually putting in. The physical strain? A complete mystery.

Enter Log2Motion, a new AI system from researchers at Aalto University and Leipzig University that's about to change how we think about smartphone design. Essentially, it takes your mundane tap logs and turns them into a full-body motion simulation. Because apparently, that's where we are now.

The Hidden Workout in Your Pocket

Traditional phone analytics are a bit like a sports tracker that only tells you where you ran, not how hard you ran. Designers could see whether you tapped a button, but had no idea whether that tap felt like a small feat of strength or a relaxing glide. This meant comfort was largely a guessing game.

Antti Oulasvirta, a professor at Aalto University, points out this is the first tool that helps designers quickly figure out how physically exhausting a mobile interface might be. No more just knowing where a finger touched; now, we're talking about whether it felt like a tiny workout.

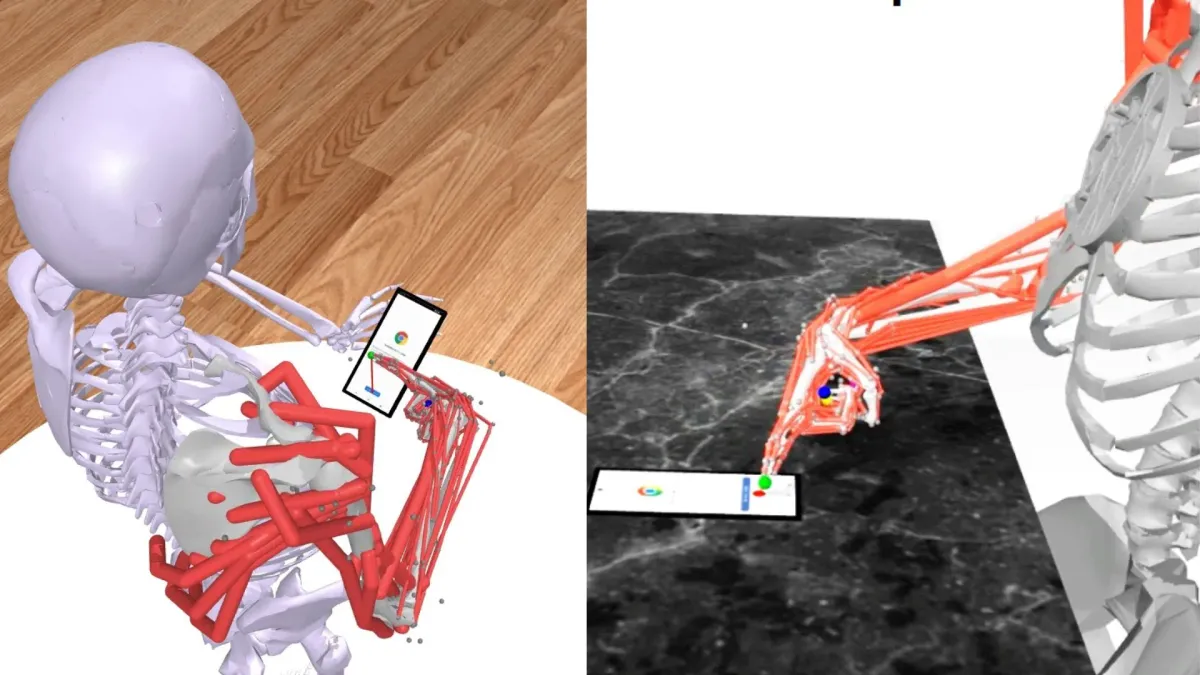

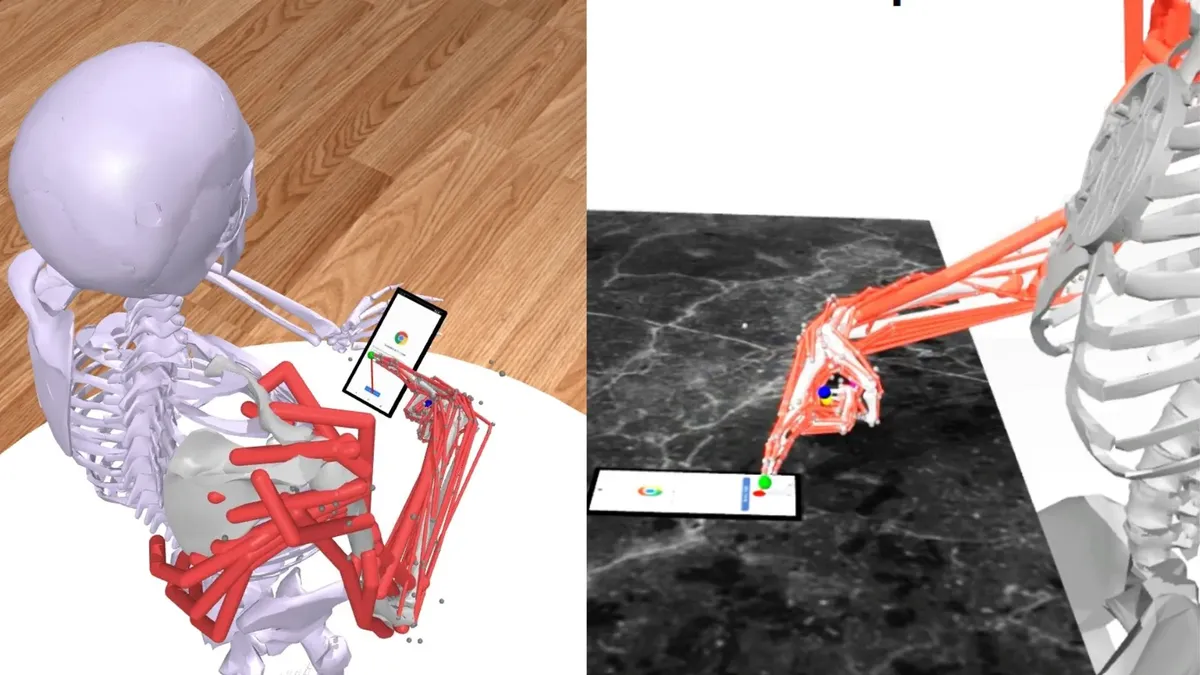

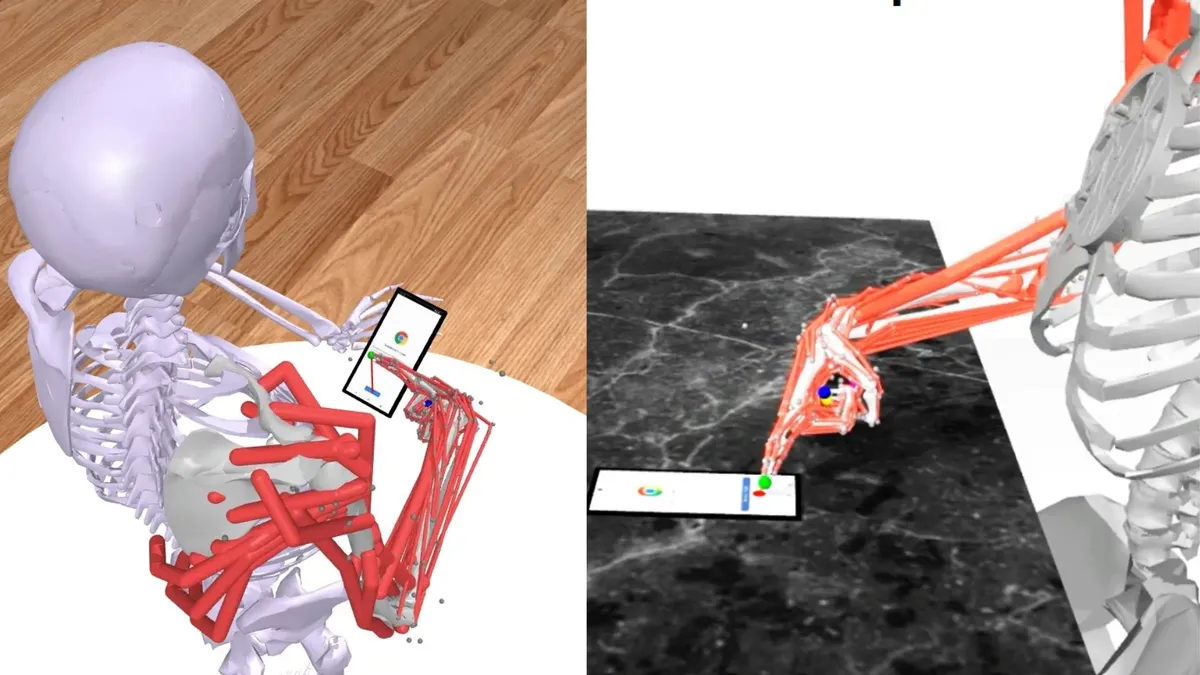

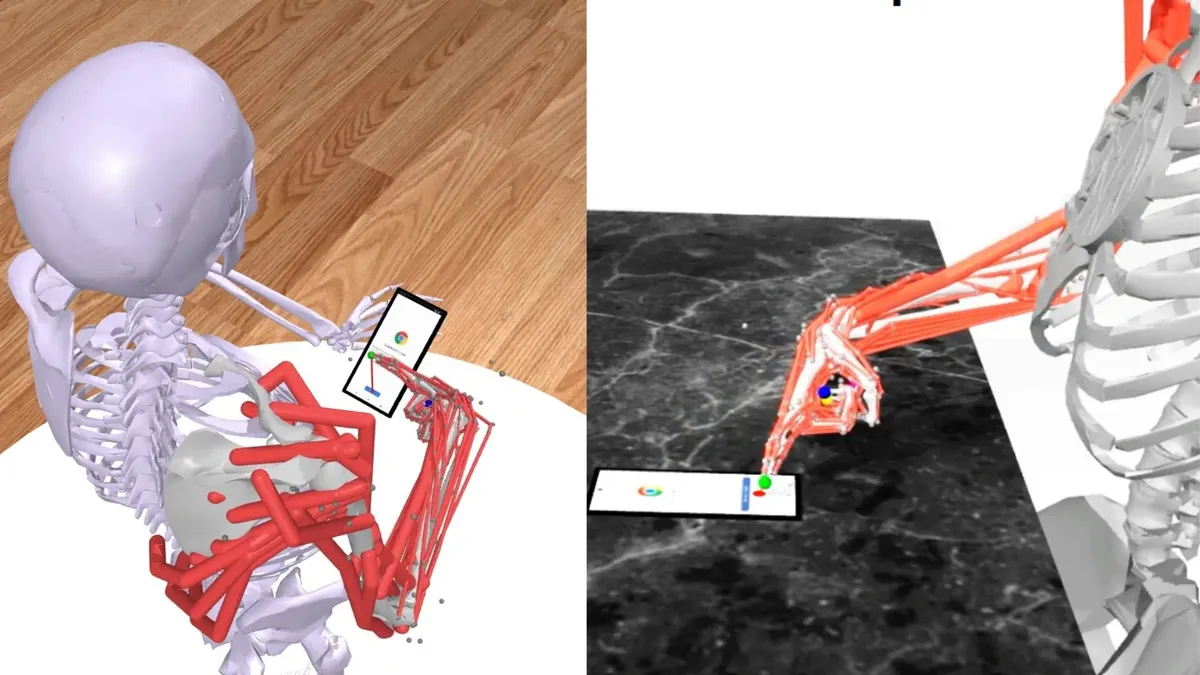

To pull this off, the team built Log2Motion around a musculoskeletal model. Think of it as a digital human skeleton and muscle system, but specifically trained to mimic how your finger moves across a screen. Then, using reinforcement learning and a physics engine, it interacts with actual mobile apps in real time. It's like a tiny, invisible stunt double for your finger, tirelessly testing every swipe.

Designing for the Human Hand (Finally)

The system translates your raw touch data into detailed motion sequences, estimating everything from speed and precision to the sheer physical effort involved. And the validation? They used motion capture data from actual humans. Because nothing says scientific rigor like strapping sensors to people while they scroll TikTok.
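For a rough sense of what "raw touch data" gives you before any simulation happens, here is a minimal Python sketch. It is not Log2Motion's method: the `TouchSample` record and the `swipe_stats` helper are hypothetical names invented for illustration, and the sketch only computes how far and how fast a finger traveled on the screen. The physical effort behind that movement is the part a plain log cannot tell you, and it is the part the researchers' musculoskeletal simulation is meant to estimate.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class TouchSample:
    """One logged touch point (hypothetical log format)."""
    t: float  # timestamp in seconds
    x: float  # horizontal screen position in pixels
    y: float  # vertical screen position in pixels


def swipe_stats(samples):
    """Return path length, duration, and mean speed of one swipe."""
    if len(samples) < 2:
        # A single point (a tap) has no path and no speed.
        return {"path_px": 0.0, "duration_s": 0.0, "mean_speed_px_s": 0.0}
    # Sum the straight-line distances between consecutive samples.
    path = sum(
        hypot(b.x - a.x, b.y - a.y) for a, b in zip(samples, samples[1:])
    )
    duration = samples[-1].t - samples[0].t
    speed = path / duration if duration > 0 else 0.0
    return {"path_px": path, "duration_s": duration, "mean_speed_px_s": speed}


# A made-up upward swipe: 300 pixels in half a second.
swipe = [
    TouchSample(0.00, 100.0, 800.0),
    TouchSample(0.25, 100.0, 650.0),
    TouchSample(0.50, 100.0, 500.0),
]
stats = swipe_stats(swipe)
print(stats)
```

Two swipes with identical numbers here could still feel very different to the hand making them, which is exactly the gap the article describes.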

The findings are pretty fascinating. Turns out, up-down and down-up swipes demand more effort. Those tiny icons? The ones tucked into the corners of your display? They're basically tiny strength tests. Your thumb is getting a workout you didn't even know it signed up for.

These insights aren't just for curious scientists. They could fundamentally shift how mobile interfaces are designed. Instead of just focusing on speed and aesthetics, developers can now factor in physical strain. This could also be a game-changer for accessibility, letting designers test how an interface feels for users with tremors, reduced strength, or prosthetics. It can even simulate common, awkward scenarios, like one-handed scrolling while lounging on the couch. Because who hasn't been there?

As our phones become extensions of our hands (and, let's be honest, our brains), designing interfaces that feel as good as they look isn't just a nicety. It's a necessity. And now, we have an AI that can estimate how much effort you're putting into that endless scroll.