Generative AI is hungry. Generating 1,000 images with a powerful model can produce roughly as much carbon as driving a car four miles. As these systems grow more capable, their energy demand is becoming the real bottleneck, not just for tech companies, but for anyone who cares about the electricity bill or the grid.

Researchers at Shanghai Jiao Tong University and Tsinghua University just demonstrated something that might help: an optical chip called LightGen that processes image and video generation tasks more than 100 times faster than Nvidia's A100 GPU, while using a fraction of the energy.

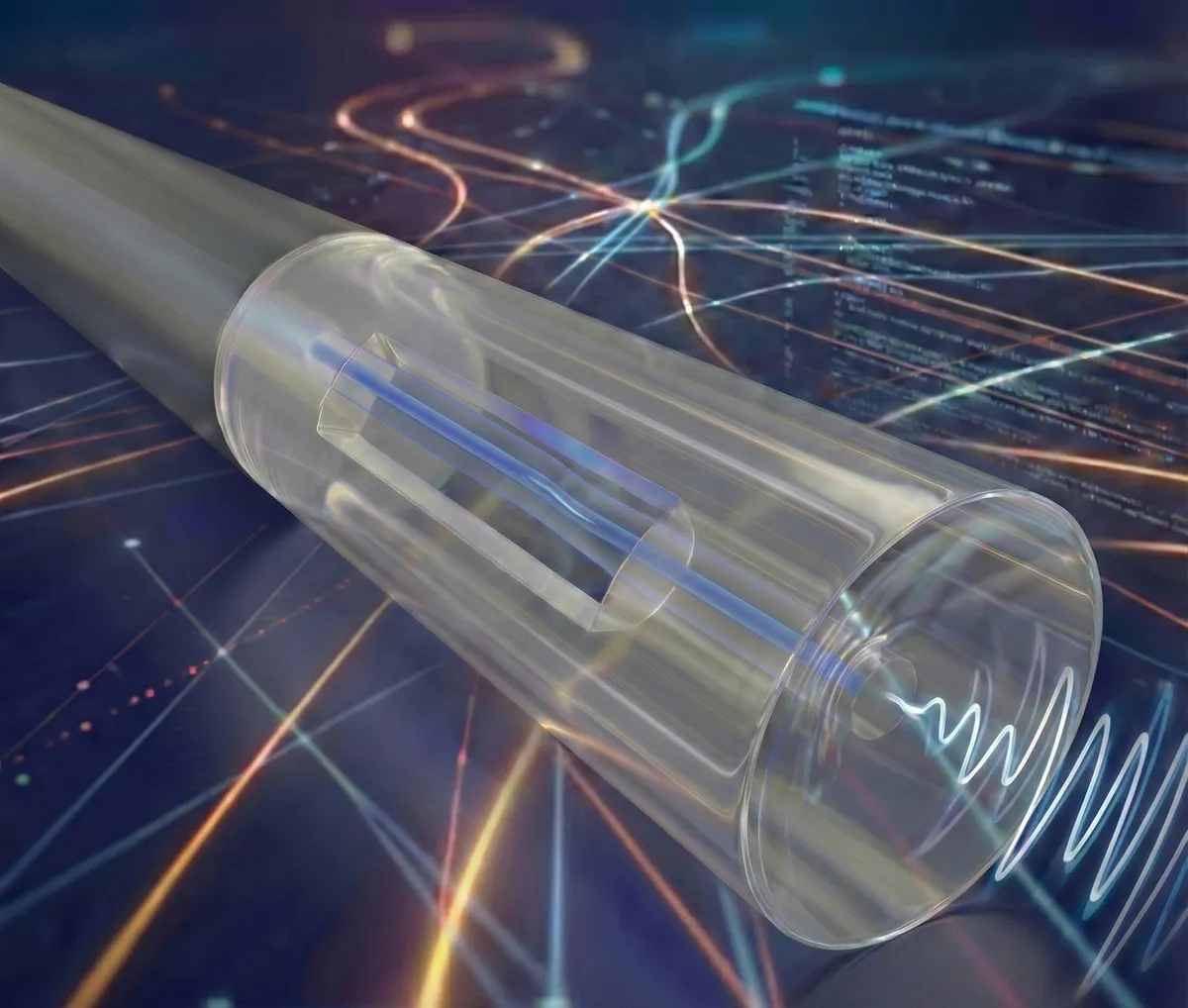

The insight is almost poetic. Instead of pushing electrons through silicon, LightGen uses light. Photonic computing isn't new in theory, but the practical challenge has always been density—fitting enough processing power into something small enough to be useful. Previous optical chips maxed out at a few thousand artificial neurons. LightGen cracks this by stacking more than two million neurons onto a device the size of a postage stamp using 3D packaging.

How it actually works

Generative AI models compress messy, high-dimensional data (like the infinite variations of a dog's face) into simpler, workable representations. LightGen does this entirely with light. An image passes through an optical encoder made of metasurfaces—ultra-thin structures that bend and shape light like a microscopic lens. This optical filtering naturally strips away unnecessary detail, condensing the information into what researchers call an "optical latent space," stored in an array of optical fibers.
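To make the idea of compressing an image into a smaller latent representation concrete, here is a toy sketch in Python. It is not LightGen's method: block averaging stands in, as an assumption, for a fixed filter that discards fine detail, and the function names `encode` and `decode` are hypothetical.

```python
# Toy illustration only: block averaging stands in for a fixed encoder.
# This is NOT how LightGen's optics work; all names here are hypothetical.

def encode(image, block):
    """Compress a square 2D list by averaging each block x block tile."""
    size = len(image)
    if size % block != 0:
        raise ValueError("image size must be divisible by block")
    latent = []
    for r in range(0, size, block):
        row = []
        for c in range(0, size, block):
            # Collect every pixel in the current tile and average it.
            tile = [image[r + i][c + j]
                    for i in range(block) for j in range(block)]
            row.append(sum(tile) / len(tile))
        latent.append(row)
    return latent


def decode(latent, block):
    """Expand each latent value back into a tile; fine detail stays lost."""
    out = []
    for row in latent:
        expanded = [value for value in row for _ in range(block)]
        out.extend([list(expanded) for _ in range(block)])
    return out


if __name__ == "__main__":
    image = [
        [1, 1, 5, 5],
        [1, 1, 5, 5],
        [2, 4, 0, 0],
        [4, 2, 0, 8],
    ]
    latent = encode(image, 2)   # 16 values compressed to 4
    print(latent)
    print(decode(latent, 2))    # same size as the input, but smoothed
```

The point of the sketch is only that a fixed transform can shrink 16 numbers to 4 while keeping the broad structure. A real generative model learns its encoder rather than using a hand-picked average.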

The team tested it on real tasks: generating high-resolution animal images, converting photos into different artistic styles, and turning 2D images into 3D models. In every test, LightGen's speed and efficiency beat the A100 by two full orders of magnitude, or roughly 100 times.

There's a catch. The chip still needs bulky lasers and spatial light modulators to generate input signals—it's not something you're fitting into a laptop tomorrow. The technology is still in the lab, and miniaturizing those components will take time.

But the direction is clear. As AI models demand more power and the electricity grid tightens, optical processors could shift how we think about computing at scale. The physics is working. Now it's just engineering.