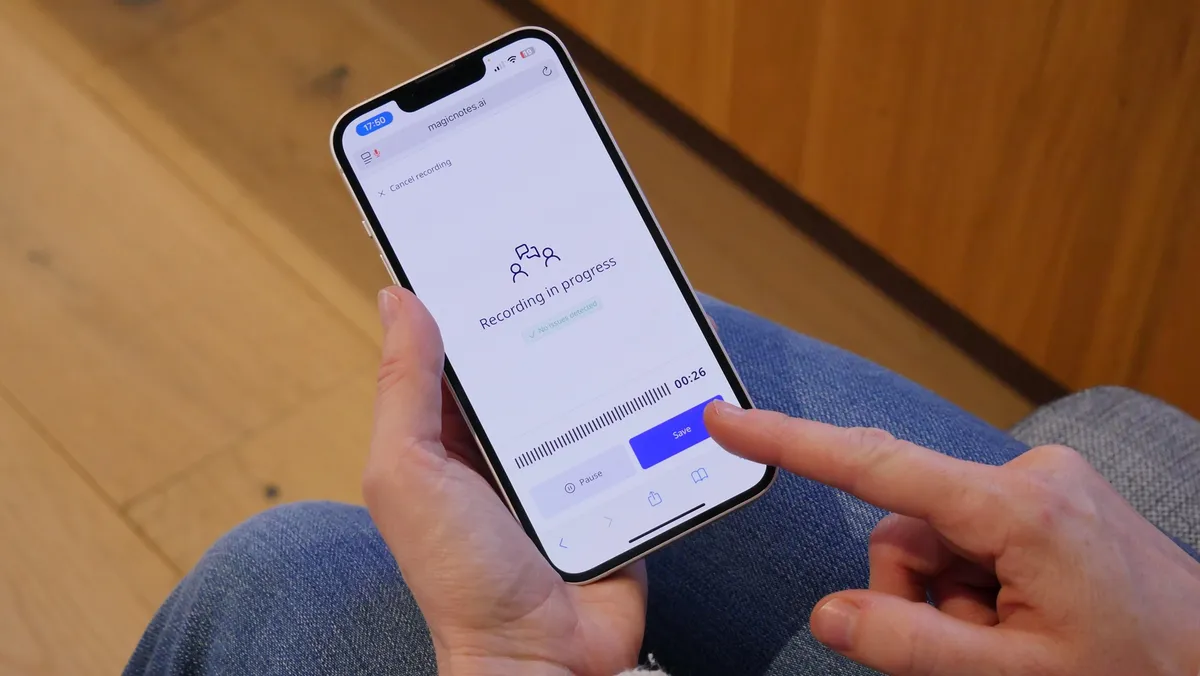

When social workers spend their days typing up notes instead of talking to clients, something has gone wrong. Beam's Magic Notes, an AI tool that transcribes and summarizes meeting notes, is now being used by 65,000 practitioners across 200 organizations in the UK — and a new public consultation suggests most people think that's a good thing.

The tool emerged from a simple problem: Beam's own caseworkers were drowning in administrative work. Since launching in 2023, Magic Notes has spread across local authorities, hospitals, and social care services. But with AI in public services drawing increasing scrutiny, Beam partnered with Nesta to ask the public what they actually think about it.

Between September and October 2025, 137 UK adults — including people who use social care services — spent time in small deliberation groups learning about Magic Notes and weighing its benefits against its risks. The results were striking: 83% felt positive about social workers using the tool, and 86% believed it would benefit social care overall.

What People Actually Value

Participants understood the appeal immediately. They saw how the tool could give social workers back the time they need to actually listen to clients, improve the quality of case notes, and ease the burnout that's pushing experienced practitioners out of the sector. These aren't abstract benefits — they're the difference between a social worker who has five minutes to spend with you and one who has fifteen.

But the public wasn't naive about it. When asked about risks, participants raised legitimate concerns: Could the AI get things wrong? What happens to the data? Might social workers start trusting the tool more than their own judgment? On that last worry, participants were clear: 74% said the benefits outweighed the risks, but only if social workers reviewed and approved every summary the AI generated. In other words, the technology should support human judgment, not replace it.

What's striking is how the consultation itself shifted opinion. People who started skeptical became more confident once they understood how the safeguards actually worked. This matters because it suggests the problem isn't AI in social care — it's the lack of honest conversation about how it's being used.

The consultation also revealed something darker: only 13% of participants said they were satisfied with how social care works today. The system is under such strain that people are willing to embrace new tools, not out of enthusiasm for technology, but out of desperation for something that works better. That's the real story here.

Kathy Peach, director of the Centre for Collective Intelligence at Nesta, framed it bluntly: "The government's AI Adoption plan is bound to fail unless there's public support for AI in public services." Public trust in technology doesn't happen by accident. It happens when organizations do the unglamorous work of asking people what they think, listening to their concerns, and building safeguards that actually address them.

Beam's approach of opening Magic Notes to scrutiny, rather than quietly rolling it out, suggests a different model for how AI gets deployed in public services. Not "we built this, trust us," but "we built this, here's how it works, what do you think could go wrong?"

The next question isn't whether more organizations will adopt AI tools. It's whether more will take the time to ask the public first.