Data centers are consuming energy at four times the rate of other industries, mostly because AI systems keep getting bigger and hungrier. A team of German researchers thinks they've found a way to flip that equation: stop making computers that process everything as 1s and 0s, and start building ones that work more like actual brains.

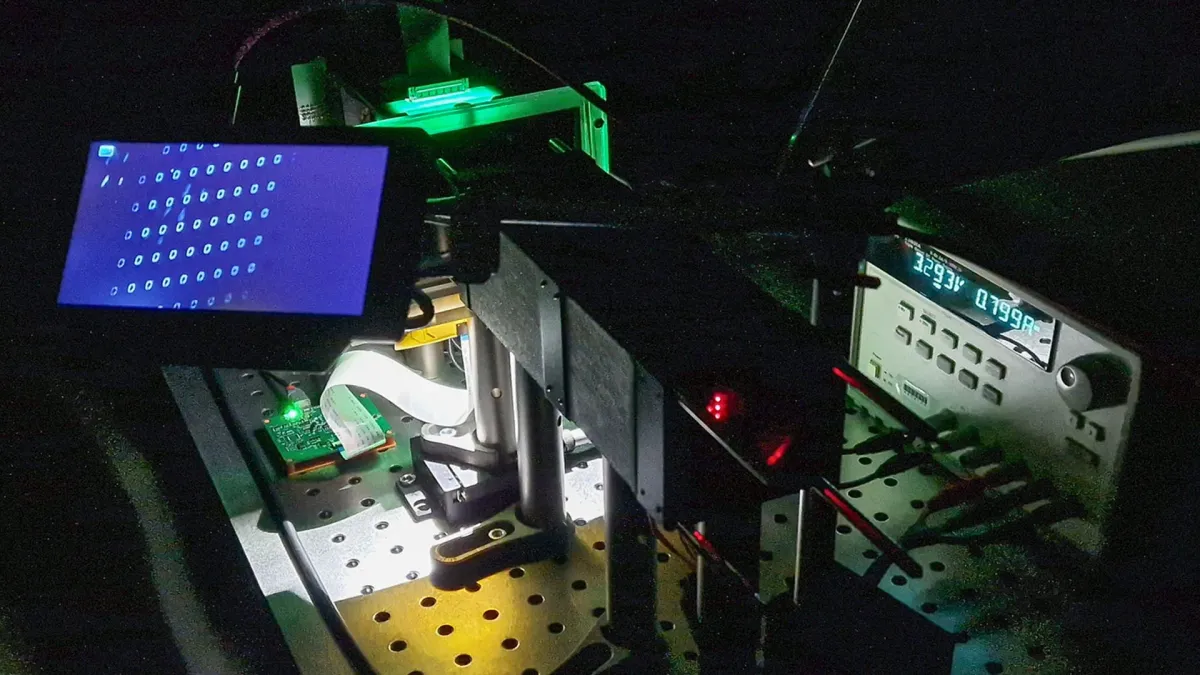

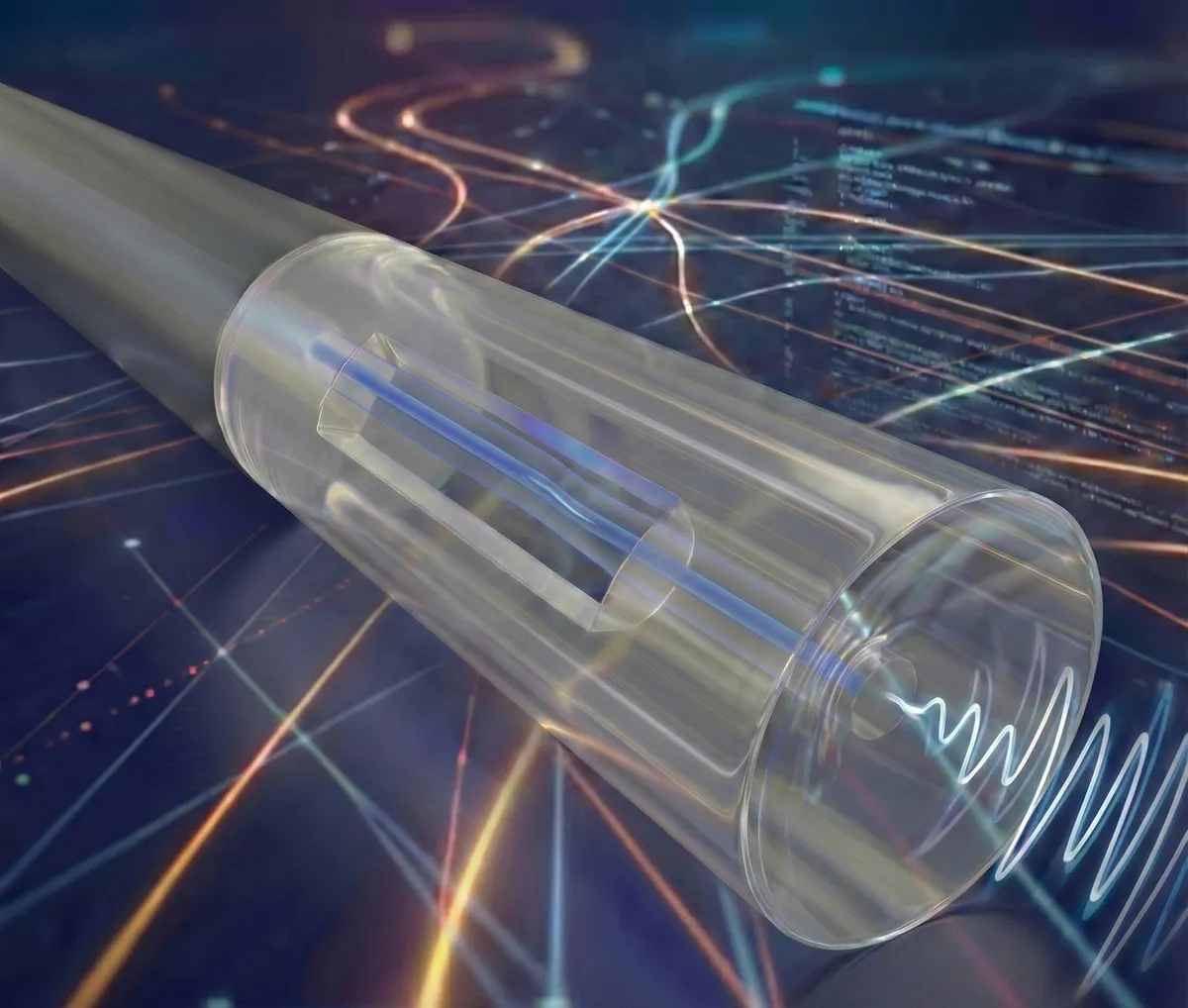

The project, called BRIGHT, swaps out traditional silicon transistors for tiny light-emitting diodes (LEDs) as the building blocks of its neural network. It sounds like a small change. It's not. By letting light do the heavy lifting instead of electricity, the system can process information in parallel, the way neurons do, while consuming a fraction of the energy that conventional AI hardware demands.

"The combination of light, microelectronics and neuromorphic thinking opens up a path to powerful AI that consumes significantly less energy," said Angela Ittel, president of Technische Universität Braunschweig, one of the lead institutions.

How Brain-Inspired Computing Actually Works

Traditional computers break every piece of data into binary sequences and work through them step by step, a process that's fast but power-hungry at scale. The BRIGHT team is doing something fundamentally different. Instead of simulating neural networks through long chains of digital calculations, they're implementing the network directly in hardware. The LEDs act like neurons, firing in parallel and processing multiple streams of information at once.
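To make that contrast concrete, here is a toy sketch in Python. It is an illustration built on our own assumptions, not the BRIGHT design: one layer of two "neurons" with made-up weights, a simple rectifying activation standing in for a neuron that only fires when driven, and a step counter. The hypothetical function `layer_step_by_step` mimics a conventional processor doing one multiply-add at a time. The hypothetical `layer_all_at_once` computes the same result but counts it as a single time step, modeling the idealized assumption that every neuron and connection in the hardware acts simultaneously.

```python
# Toy illustration only (NOT the BRIGHT team's design): one layer of a
# neural network evaluated two ways, with a count of time steps.

def relu(v):
    # Assumed stand-in activation: a "neuron" emits nothing for negative drive.
    return v if v > 0.0 else 0.0

def layer_step_by_step(weights, inputs):
    """Digital style: one multiply-add after another. Returns (outputs, steps)."""
    outputs, steps = [], 0
    for row in weights:                  # each neuron in turn
        total = 0.0
        for w, x in zip(row, inputs):    # each connection in turn
            total += w * x
            steps += 1                   # one sequential operation
        outputs.append(relu(total))
    return outputs, steps

def layer_all_at_once(weights, inputs):
    """Idealized in-hardware style: every neuron sums its inputs simultaneously.
    Python still computes this sequentially; the step count of 1 only models
    the assumption of fully parallel physical hardware."""
    outputs = [relu(sum(w * x for w, x in zip(row, inputs))) for row in weights]
    return outputs, 1

# Hypothetical example values.
weights = [[0.5, -1.0, 2.0],
           [1.0, 1.0, 1.0]]
inputs = [1.0, 2.0, 3.0]

print(layer_step_by_step(weights, inputs))  # ([4.5, 6.0], 6)
print(layer_all_at_once(weights, inputs))   # ([4.5, 6.0], 1)
```

Both functions return the same outputs; only the step count differs (6 versus 1 here). The sequential count grows with every connection added to the network, which is the scaling cost described above.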

The consortium, which includes Leibniz University Hannover, Ostfalia University of Applied Sciences, and Germany's Physikalisch-Technische Bundesanstalt, has secured €15 million ($17.6 million) in funding from Lower Saxony and the Volkswagen Foundation. They're combining silicon-based circuits for logic and control with gallium nitride devices that emit light efficiently, creating a hybrid system that's more than just a proof of concept.

Over the next five years, the team plans to scale up. They want to increase the number of optical connections between LEDs, improve the light-emitting components themselves, and refine how different chip technologies integrate together. The goal is to move from laboratory prototype toward something that could actually power the next generation of AI systems without requiring a power plant to run it.

The timing matters. As AI models grow more sophisticated and data centers multiply, energy consumption has become a real constraint—not just for operating costs, but for the planet. A system that delivers comparable computing power while using a fraction of the electricity is the kind of progress that changes what's possible.