Nvidia is expanding its AI efforts with new chips and data centers. The company unveiled its Vera CPU and the Vera Rubin platform. These are designed to power the next generation of AI systems and large AI data centers.

Nvidia says the new processor and architecture will handle the fast growth of AI tasks. These tasks involve software agents that plan, execute code, and interact with other systems on their own.

Agentic AI Hardware Push

The Vera CPU is made for these new AI tasks. Nvidia claims it is twice as efficient and 50% faster than older rack-scale CPUs. This chip will power AI data centers. These centers train models, run AI agents, and manage large computing clusters across cloud and enterprise systems.

Nvidia says the Vera CPU changes how processors support modern AI. CPUs are now central to coordinating AI tasks across large computing environments, not just supporting GPUs.

Jensen Huang, Nvidia's CEO, noted that Vera arrives at a turning point for AI. He said that as AI becomes "agentic," meaning it can reason and act, the systems that manage this work become more important. Huang added that the CPU is now driving the AI model, not just supporting it.

The Vera processor has 88 custom-designed Olympus CPU cores and high-bandwidth memory. This allows it to manage thousands of AI environments at once. A single rack with 256 Vera CPUs can support over 22,500 AI environments for reinforcement learning or agent testing.
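The rack-level figure lines up closely with the core count. As a rough check, and assuming each environment occupies about one CPU core (an assumption made here for illustration, not something Nvidia has stated), the arithmetic can be sketched as:

```python
# Figures quoted in the article
CORES_PER_CPU = 88            # Olympus cores per Vera CPU
CPUS_PER_RACK = 256           # Vera CPUs in a single rack
QUOTED_ENVIRONMENTS = 22_500  # "over 22,500" AI environments per rack

# Total CPU cores available in one rack
total_cores = CORES_PER_CPU * CPUS_PER_RACK

# Illustrative assumption: environments map roughly one-to-one onto cores.
# This ratio shows how close the quoted figure is to the core count.
envs_per_core = QUOTED_ENVIRONMENTS / total_cores

print(total_cores)  # 22528
print(round(envs_per_core, 3))
```

A rack of 256 CPUs holds 22,528 cores, so the "over 22,500" figure would be consistent with roughly one environment per core. The actual mapping may differ.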

The processor will also work with Nvidia GPUs in the Vera Rubin architecture, where CPUs and GPUs share data over high-speed connections. Major technology companies, including Alibaba, Meta, Oracle Cloud Infrastructure, Dell Technologies, and Lenovo, plan to use systems built on this new processor.

AI Factories Unveiled

The Vera CPU is part of Nvidia's larger Vera Rubin platform. Nvidia calls this a new generation of AI infrastructure. It is designed to work as one supercomputer across many hardware racks.

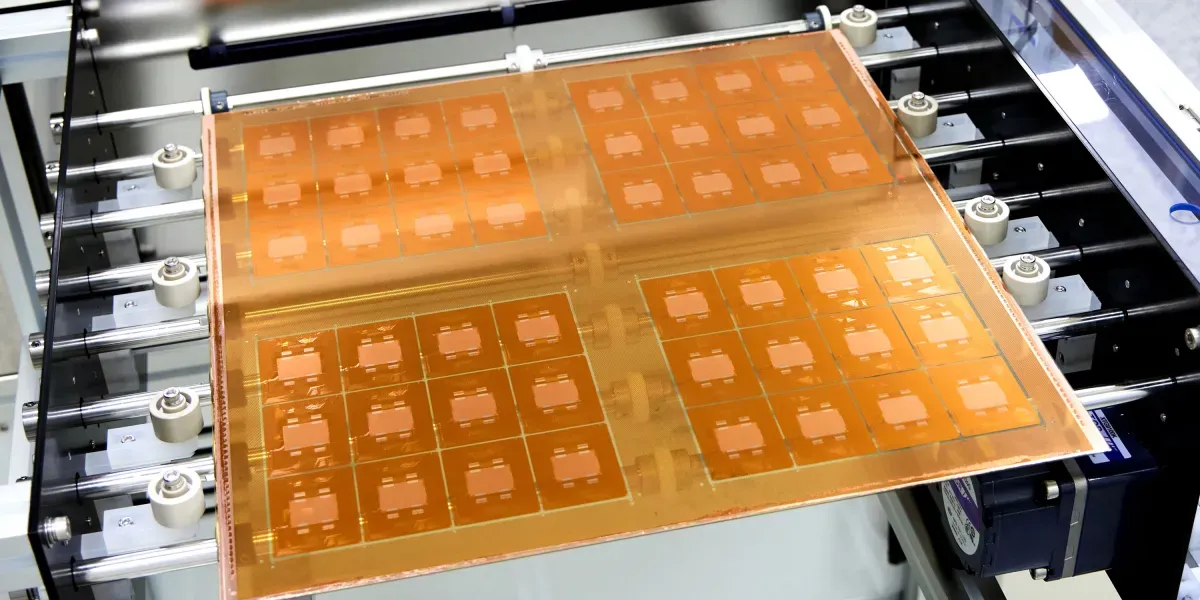

The platform uses seven chips for computing, networking, and storage. Together they power what Nvidia calls "AI factories": large facilities that generate the huge volumes of AI tokens modern models require.

Huang described Vera Rubin as a "generational leap." He said it combines seven breakthrough chips and five racks into one giant supercomputer, and that it will power every stage of AI. Huang also stated that the "agentic AI inflection point has arrived," with Vera Rubin starting the biggest infrastructure buildout in history.

A key configuration, called Vera Rubin NVL72, combines 72 GPUs and 36 Vera CPUs into one rack system, connected by Nvidia's NVLink technology. Nvidia says this system can offer up to four times better AI training performance and up to 10 times higher inference efficiency compared to older GPU platforms.

Nvidia is also taking its AI platform beyond Earth. It is expanding into space computing systems for satellites and orbital data centers. Huang said, "Space computing, the final frontier, has arrived." He added that AI processing across space and ground systems allows for real-time sensing, decision-making, and autonomy.

Nvidia expects Vera CPU-based systems and Vera Rubin infrastructure to start shipping through hardware partners in the second half of this year.