Researchers in Germany have created a search robot that can find lost items in homes. It uses language models and 3D mapping to track objects.

Scientists at the Technical University of Munich (TUM) developed the robot, which they describe as "a broomstick on wheels" with a camera on top. It is one of the first robots to use this kind of image understanding to carry out a specific task.

How the Robot Works

The robot was designed at TUM’s Learning Systems and Robotics Lab. Given a command, it searches for the requested item by combining information from the internet with a spatial map of its surroundings, which lets it locate objects efficiently.

Angela Schoellig, professor of robotics and AI at TUM, explained that the robot first has to scan its surroundings. It builds a 3D map of the room so that it can find items such as glasses misplaced in the kitchen.

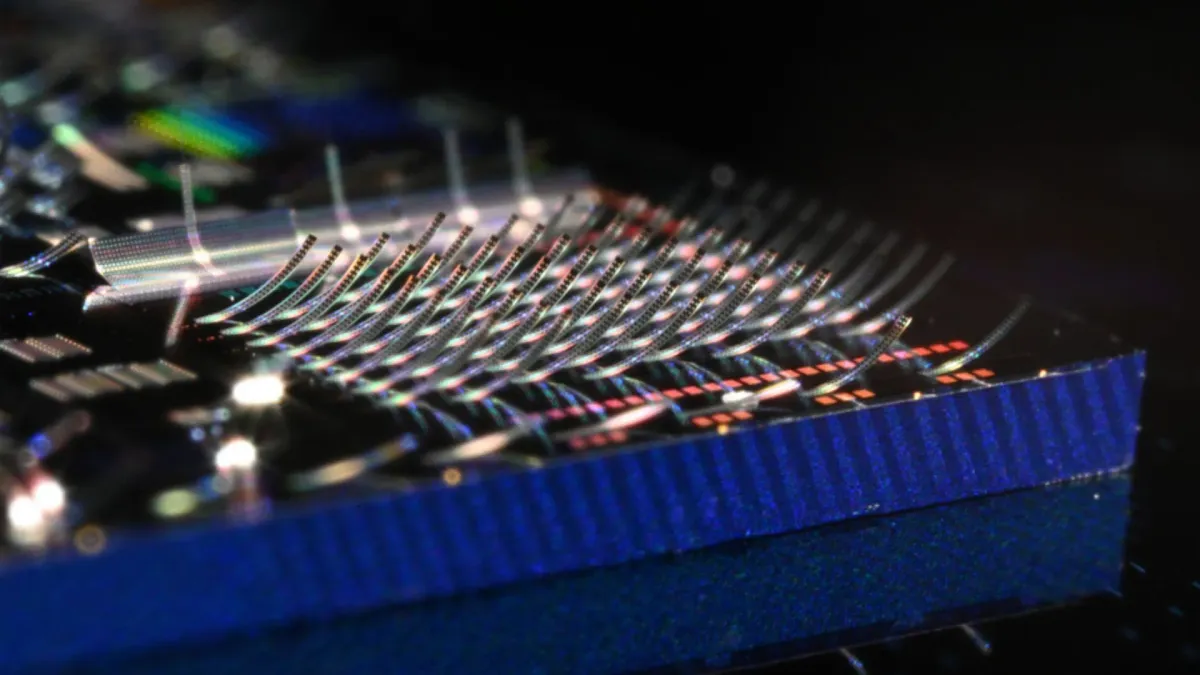

The camera records ordinary 2D images that also carry depth information. From this data, the robot builds a continuously updated spatial map that is accurate to within a few centimeters.
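The article does not say how the depth images become a map. As a rough illustration, the sketch below uses the textbook pinhole camera model to turn each depth pixel into a 3D point, then drops the points into a sparse grid of 2 cm cells. The camera parameters, the 5 m range cut-off, and the cell size are hypothetical. The sketch also ignores the robot's own movement; a real system would first transform each point by the robot's pose before adding it to the map.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole camera parameters in pixels (hypothetical values, not the TUM robot's)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point, x coordinate
    cy: float  # principal point, y coordinate


def backproject(u: int, v: int, depth_m: float, k: Intrinsics) -> tuple[float, float, float]:
    """Lift pixel (u, v) with a depth reading in metres to a 3D point in the camera frame."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def depth_image_to_points(depth: list[list[float]], k: Intrinsics,
                          max_depth_m: float = 5.0) -> list[tuple[float, float, float]]:
    """Convert a depth image (rows of metres, 0.0 = no reading) into 3D points."""
    points = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            if 0.0 < d <= max_depth_m:  # skip missing and out-of-range readings
                points.append(backproject(u, v, d, k))
    return points


def update_voxel_map(voxels: dict[tuple[int, int, int], int],
                     points: list[tuple[float, float, float]],
                     voxel_size_m: float = 0.02) -> dict[tuple[int, int, int], int]:
    """Add points to a sparse voxel grid (2 cm cells by default), counting hits per cell.

    Calling this on every new frame keeps the map up to date. Points are assumed to
    already be in a common frame; this sketch does not apply any robot pose.
    """
    for x, y, z in points:
        key = (math.floor(x / voxel_size_m),
               math.floor(y / voxel_size_m),
               math.floor(z / voxel_size_m))
        voxels[key] = voxels.get(key, 0) + 1
    return voxels
```

Each new camera frame would pass through `depth_image_to_points` and then `update_voxel_map`, so cells that are seen repeatedly accumulate evidence while the map keeps growing as the robot moves.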

At the same time, a laptop feeds additional input into the system: it identifies the objects in each image and assesses how relevant they are to people.

Schoellig noted that this kind of understanding matters for factory robots and for care robots in homes, and is especially crucial for robots that operate in constantly changing environments. The robot can tell, for example, that a table or windowsill is a plausible place for glasses, while a stovetop or sink is not.

Smarter Object Tracking

The new system uses AI in two ways. First, computer vision algorithms detect items and surfaces in the environment. Second, a language model interprets how these objects relate to one another. The system then converts this information into instructions the robot can use to navigate.

Schoellig explained that the language model captures how objects relate to one another, and this knowledge is then translated into a form the robot can act on. In the 3D map, each location carries a continuously updated number that expresses how likely the missing object is to be found there.
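One simple way to picture those constantly updated numbers is classic Bayesian search: start from plausibility scores for each spot, normalize them into probabilities, search the most likely spot first, and, if the object is not found there, lower that spot's probability while raising the others. This is a generic textbook technique chosen for illustration, not the TUM team's published method. The spot names, the scores (standing in for what a language model might say about where glasses tend to end up), and the 90% detection probability are all hypothetical.

```python
def normalize(scores: dict[str, float]) -> dict[str, float]:
    """Turn non-negative plausibility scores into probabilities that sum to 1."""
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("scores must have a positive sum")
    return {spot: s / total for spot, s in scores.items()}


def update_after_miss(probs: dict[str, float], spot: str,
                      p_detect: float = 0.9) -> dict[str, float]:
    """Bayesian update after searching `spot` without finding the object.

    p_detect is the (hypothetical) chance the robot would have seen the object
    had it been there. The searched spot loses probability; all others gain.
    """
    p = probs[spot]
    denom = 1.0 - p * p_detect  # probability of a miss at this spot
    updated = {s: q / denom for s, q in probs.items()}
    updated[spot] = p * (1.0 - p_detect) / denom
    return updated


def most_likely(probs: dict[str, float]) -> str:
    """Return the spot the robot should check next."""
    return max(probs, key=probs.get)


# Hypothetical plausibility scores for "glasses" (not data from the study).
prior = {"table": 5, "windowsill": 3, "floor": 1, "sink": 0.5, "stovetop": 0.5}
probs = normalize(prior)
first = most_likely(probs)                     # the table is checked first
probs = update_after_miss(probs, first)        # ...and the glasses are not there
second = most_likely(probs)                    # the windowsill is next
```

With these made-up numbers, the table starts at 50%; after an empty search it drops to about 9%, and the windowsill, now at about 55%, becomes the next place to look. Checking spots in order of probability rather than at random is what makes this kind of search efficient.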

In tests, the robot checked likely spots about 30% more efficiently than a random search would. The robot also has a memory: it stores earlier images and compares them with new visual data.

If a new item appears in the kitchen, for instance, the robot detects the change with about 95% accuracy and marks that spot as very likely to contain the missing item.
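The memory-and-comparison step can be sketched in the same spirit: keep the set of object labels previously seen at each spot, compare it with the latest observation, and scale up the probability of any spot where something new has appeared. The labels, the boost factor of 5, and the idea of working with per-spot label sets are hypothetical simplifications; the sketch makes no attempt to reproduce the 95% detection accuracy reported for the real robot.

```python
def detect_new_items(previous: dict[str, set[str]],
                     current: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return {spot: new_labels} for spots where labels appear that were not seen before."""
    changes = {}
    for spot, labels in current.items():
        new = labels - previous.get(spot, set())
        if new:
            changes[spot] = new
    return changes


def boost_changed_spots(probs: dict[str, float], changed_spots: dict[str, set[str]],
                        factor: float = 5.0) -> dict[str, float]:
    """Multiply the probability of changed spots by a (hypothetical) factor, then renormalize."""
    raw = {s: p * (factor if s in changed_spots else 1.0) for s, p in probs.items()}
    total = sum(raw.values())
    return {s: p / total for s, p in raw.items()}


# Hypothetical memory and new observation: something unfamiliar is now on the counter.
memory = {"kitchen_counter": {"kettle"}, "table": {"vase"}}
latest = {"kitchen_counter": {"kettle", "unknown_object"}, "table": {"vase"}}
changes = detect_new_items(memory, latest)
probs = boost_changed_spots({"kitchen_counter": 0.2, "table": 0.8}, changes)
```

Here the counter's probability rises from 20% to about 56%, so the robot would look there first even though the table started out as the more plausible spot.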

Currently, the robot can only find objects in open spaces. The research team is working on an upgrade that will let it search inside drawers, cupboards, and other closed areas.

References: Search Robot Thinks for Itself; IEEE Robotics and Automation Letters