Artificial intelligence is quietly entering the rooms where nations make decisions about each other. And security researchers are watching closely — not because AI itself is inherently dangerous, but because the moment a government starts relying on an AI system to advise on foreign policy, that system becomes a target.

"As soon as you put any AI system in a position of power, where it is making a recommendation to a government, that means it will be hacked," says Bruce Schneier, a computer security expert and lecturer at Harvard Kennedy School. It's not a question of if, but when. Governments have spent decades hacking each other's electrical grids and databases. AI systems that shape diplomatic decisions would be far more valuable prizes.

The appeal is real. Ofrit Liviatan, a lecturer in Harvard's Department of Government, sees genuine potential. Large language models can speed up the drafting of laws, crunch through data at scale, expose weaknesses in existing legislation, and flag compliance gaps that human analysts might miss. For policymakers drowning in information, the efficiency gains are tempting.

But efficiency and security are often at odds. Carmem Domingues, a former AI policy adviser to the White House, points to another vulnerability: foreign adversaries could buy advertising space from domestic tech companies and use it to subtly influence how large language models respond to questions about sensitive topics. The propaganda doesn't need to be obvious. It just needs to be persistent.

The regulation gap

Right now, the world is scrambling to catch up. The European Union's AI Act is the first major attempt to set guardrails — restrictions on misinformation, surveillance, and cyberattacks baked into the rules themselves. But even this early framework is already under fire from those worried it will slow innovation.

Liviatan argues that global cooperation is essential. No single country can regulate AI in isolation; the technology moves faster than any one government's oversight. Yet that cooperation is precisely what's hard to achieve when nations see AI as a strategic advantage.

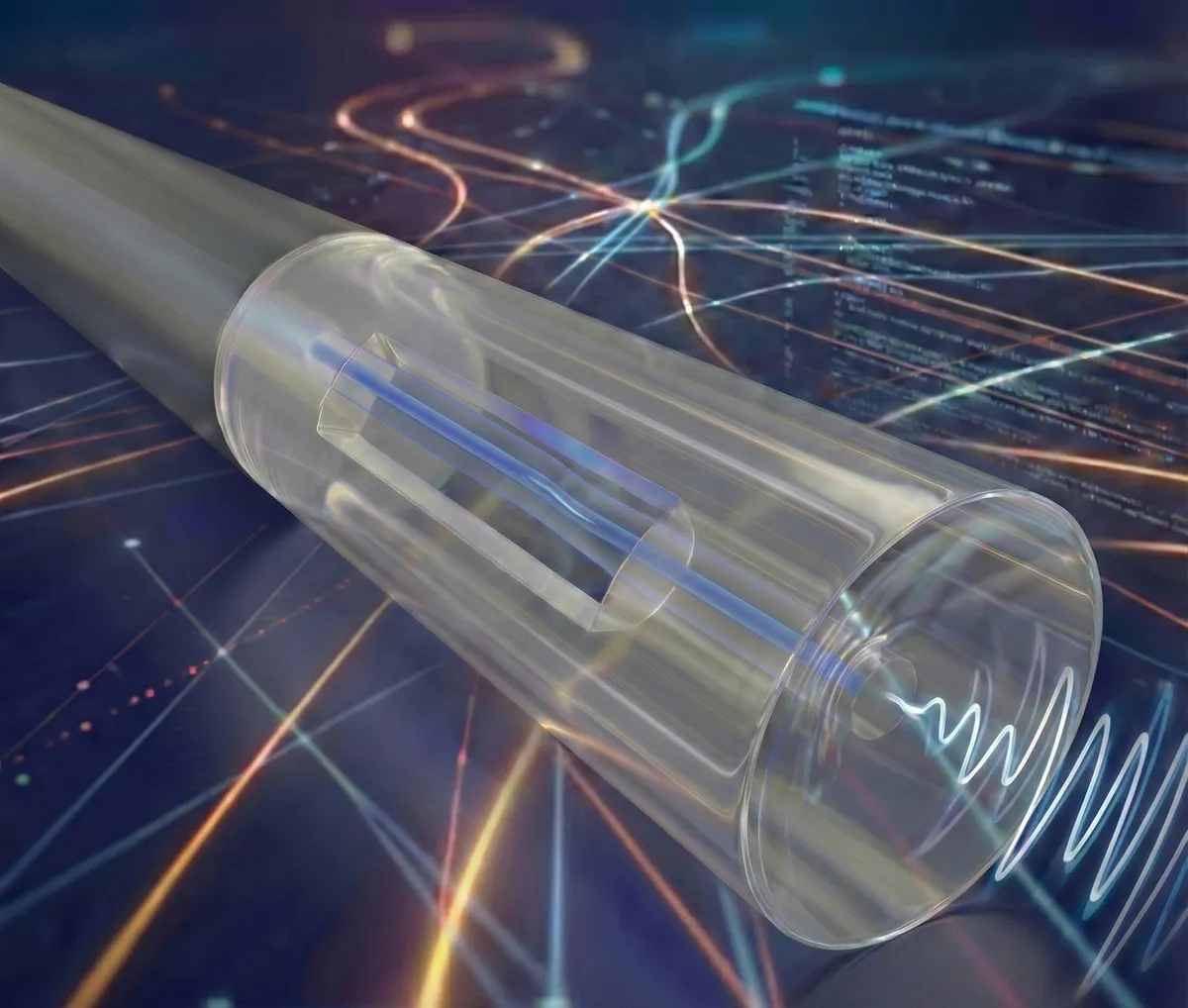

Schneier has a practical suggestion: governments might need to build their own AI systems, designed on different principles from the profit-driven models that dominate the private sector. These government systems could be reserved for sensitive negotiations and classified decisions, kept separate from the internet and hardened against infiltration. Switzerland's National Computing Centre already operates Apertus, a system designed with exactly this kind of institutional independence in mind.

The stakes are high, but not hopeless. The experts agree on one thing: the decisions made in the next few years about how AI enters government will shape whether this technology strengthens international order or undermines it. That's not a reason to avoid AI in diplomacy. It's a reason to build it carefully.