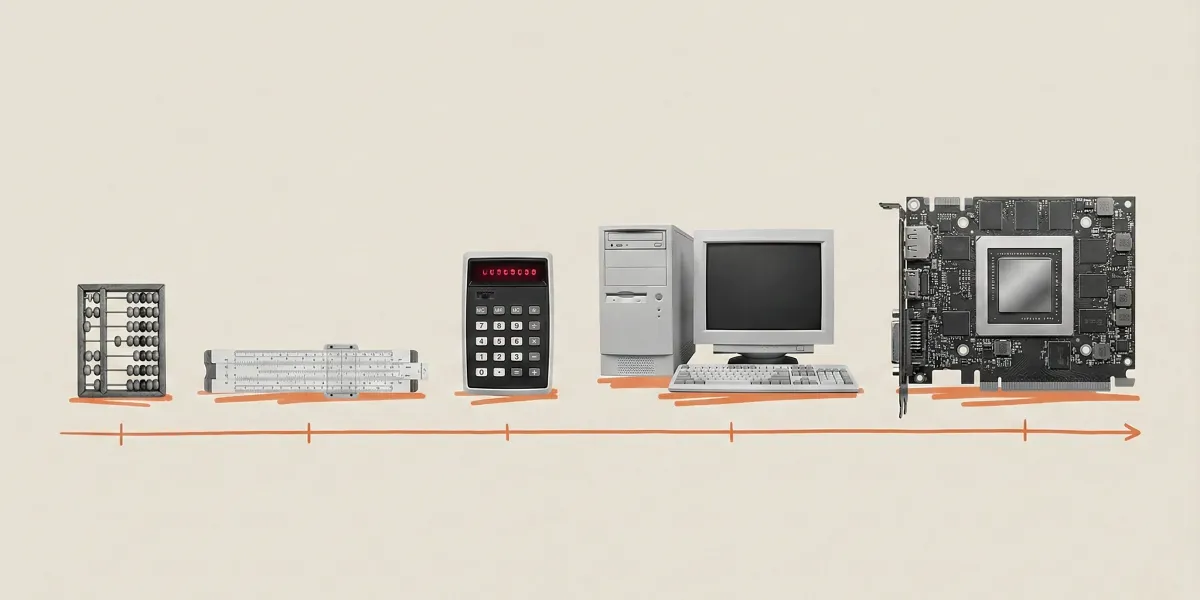

For most of human history, if you walked an hour, you covered a certain distance. Walk two hours, you covered twice that distance. Simple, right? Linear. Predictable. It's how our brains are wired. Which is why our brains are currently struggling to comprehend what's happening with AI.

Since 2010, the amount of computation used to train advanced AI models has exploded by a factor of a trillion. Early systems got by on 10¹⁴ flops (a flop, for the uninitiated, is a floating-point operation: basically, a single calculation). Today's biggest models? Over 10²⁶ flops. That's not just growth; it's a computational Big Bang, and it's the engine behind every other AI leap.
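For the curious, the trillion-fold figure falls straight out of the two exponents quoted above. A minimal sketch, using only those two numbers (the variable names are just for illustration):

```python
import math

early_flop = 1e14    # figure quoted in the text for early systems
modern_flop = 1e26   # figure quoted in the text for today's largest models

growth = modern_flop / early_flop   # how many times more computation
doublings = math.log2(growth)       # how many times the total had to double

print(f"growth factor: {growth:.0e}")
print(f"number of doublings: {doublings:.1f}")
```

Twelve orders of magnitude works out to roughly 40 doublings, which is why straight-line intuition fails so badly here.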

Why the Boom Won't Bust

Some folks predict AI will hit a wall. They point to things like Moore's Law, the observation that the number of transistors on a chip (and, loosely, computing power) doubles every couple of years, which is widely reported to be slowing. They fret about data shortages or energy limits. Extrapolate in a straight line, and those worries make sense. But AI isn't playing by linear rules.

Imagine AI training as a massive room, packed with people using calculators. Back in the day, adding more computing power meant adding more people, many of whom sat idle. The revolution now? It's not just better calculators. It's making sure every single calculator is firing on all cylinders, all the time, in perfect sync.

Three major advancements are making this possible, turning those idle calculator-wielders into a hyper-efficient superbrain:

- Faster Calculators: Chips from the likes of Nvidia have gotten ludicrously fast. We're talking more than seven times faster in just six years. New chips like Maia 200 are making performance-per-dollar ratios look like a typo.

- Faster Data Delivery: High Bandwidth Memory (HBM) technology stacks chips like tiny, data-guzzling skyscrapers. The latest HBM3 triples data delivery speed, meaning processors are never left twiddling their digital thumbs.

- Massive Computing Networks: Technologies like NVLink and InfiniBand are linking hundreds of thousands of GPUs (graphics processing units) into colossal supercomputers. These aren't just collections of machines; they're acting as single, unified thinking entities. A few years ago, this was pure sci-fi.

These improvements aren't just additive; they're multiplicative. Training a language model that took 167 minutes on eight GPUs in 2020 now takes less than four minutes on similar modern hardware. That's a 50x improvement. Moore's Law would have predicted a mere 5x. Let that sink in.
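Here is a rough sanity check of that comparison. The 167 minutes and the 50x come from the text; the five-year span and the two-year doubling period are assumptions made for this sketch, since the text gives no end year.

```python
def moores_law_factor(years: float, doubling_years: float = 2.0) -> float:
    """Speedup expected if performance simply doubles every `doubling_years`."""
    return 2 ** (years / doubling_years)

minutes_2020 = 167       # quoted training time on eight GPUs in 2020
observed_speedup = 50    # quoted improvement
years_elapsed = 5        # assumption: the text does not give the end year

minutes_now = minutes_2020 / observed_speedup
moore_speedup = moores_law_factor(years_elapsed)

print(f"implied training time now: {minutes_now:.2f} minutes")
print(f"Moore's Law alone: about {moore_speedup:.1f}x")
print(f"observed gain beyond Moore's Law: about {observed_speedup / moore_speedup:.0f}x")
```

Under these assumptions, Moore's Law alone lands a little under 6x, in the same ballpark as the "mere 5x" above, while a 50x speedup puts the run comfortably under four minutes.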

The Software Surge and What's Next

Software isn't just keeping up; it's practically setting new land speed records. The computing power needed to hit a specific performance level in AI is halving roughly every eight months. That's more than twice as fast as the 18-to-24-month doubling associated with Moore's Law. And the cost of running some new AI models? It's plummeted by a factor of up to 900 a year. Imagine that for your grocery bill.
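To see what those halving times mean on a yearly basis, one can convert each into an annual improvement factor. This is a small illustrative sketch; `annual_factor` is a made-up helper name, and the inputs are the eight-month and 18-to-24-month figures from the text.

```python
def annual_factor(period_months: float) -> float:
    """Yearly improvement factor if things double (or halve) every `period_months`."""
    return 2 ** (12 / period_months)

software = annual_factor(8)      # algorithmic efficiency, halving every 8 months
moore_fast = annual_factor(18)   # Moore's Law, optimistic end of the range
moore_slow = annual_factor(24)   # Moore's Law, conservative end of the range

print(f"software efficiency: about {software:.2f}x per year")
print(f"Moore's Law: about {moore_slow:.2f}x to {moore_fast:.2f}x per year")
print(f"eight months is {18 / 8:.2f} to {24 / 8:.2f} times shorter than 18 to 24 months")
```

The period itself is 2.25 to 3 times shorter, and because the gains compound, the yearly gap between the two curves widens every year.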

The future numbers are equally eye-popping. Leading AI labs are nearly quadrupling their computing capacity every year. Since 2020, the computing power used to train advanced models has grown fivefold annually. By 2027, global AI computing power is expected to have increased tenfold in just three years. That could mean another 1,000 times more effective computing power by the end of 2028. By 2030, we might be bringing 200 gigawatts of computing capacity online every year, roughly the combined peak electricity demand of the UK, France, Germany, and Italy. Because apparently, that's where we are now.
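Annual multipliers compound quickly, which is easy to underestimate. The sketch below turns the quoted rates into multi-year totals and back again; the three-year horizon for the first two lines is an arbitrary choice for illustration, and both helper names are hypothetical.

```python
def compound(annual_factor: float, years: float) -> float:
    """Total growth after `years` at a fixed yearly multiplier."""
    return annual_factor ** years

def implied_annual(total_factor: float, years: float) -> float:
    """Yearly multiplier implied by a total growth factor over `years`."""
    return total_factor ** (1 / years)

print(f"4x per year for 3 years: {compound(4, 3):.0f}x")
print(f"5x per year for 3 years: {compound(5, 3):.0f}x")
print(f"tenfold in 3 years: about {implied_annual(10, 3):.2f}x per year")
```

Note that the "tenfold in three years" figure implies a bit more than a doubling each year, which is noticeably slower than the 4x and 5x rates quoted for leading labs and frontier training runs. The different numbers describe different things, so they need not agree.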

This explosive growth isn't just about better chatbots. It's about AI systems achieving near-human capabilities. Think "agents" that can write code for days, manage projects for months, make calls, negotiate contracts, and handle logistics. Not just basic assistants, but entire teams of AI workers that think, collaborate, and get things done. This isn't theoretical; the shift is already underway, ready to reshape every industry that involves, well, thinking.

Energy is the elephant in the server room, no doubt. One AI rack, roughly the size of a refrigerator, can suck down as much power as 100 homes. But here's the kicker: the cost of solar power has fallen by a factor of nearly 100 over 50 years, and battery prices have dropped 97% in three decades. There's a clear path to scaling AI with clean energy. The money's flowing, the engineers are delivering, and warehouse-sized supercomputers powered by the sun aren't science fiction. They're being built right now, all over the world.
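The two cost figures are quoted in different units (a fold change and a percentage), so it helps to put them on the same footing. A minimal sketch, assuming a constant yearly rate of decline, which real prices do not follow exactly:

```python
def annual_decline(remaining_fraction: float, years: float) -> float:
    """Average yearly price drop (as a fraction) that leaves `remaining_fraction` after `years`."""
    return 1 - remaining_fraction ** (1 / years)

solar_remaining = 1 / 100      # "nearly 100 times" cheaper over 50 years
battery_remaining = 1 - 0.97   # a 97% drop leaves 3% of the original price

solar_rate = annual_decline(solar_remaining, 50)
battery_rate = annual_decline(battery_remaining, 30)
battery_fold = 1 / battery_remaining

print(f"solar: about {solar_rate:.1%} cheaper each year")
print(f"batteries: about {battery_rate:.1%} cheaper each year, about {battery_fold:.0f}x overall")
```

Seen this way, a 97% drop is a roughly 33-fold reduction, and both technologies have been getting cheaper by something like a tenth every year for decades.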

Those expecting linear growth are going to keep being surprised. The explosion in computing power isn't just a story; it's arguably the technology story of our time. And it's only just getting started.