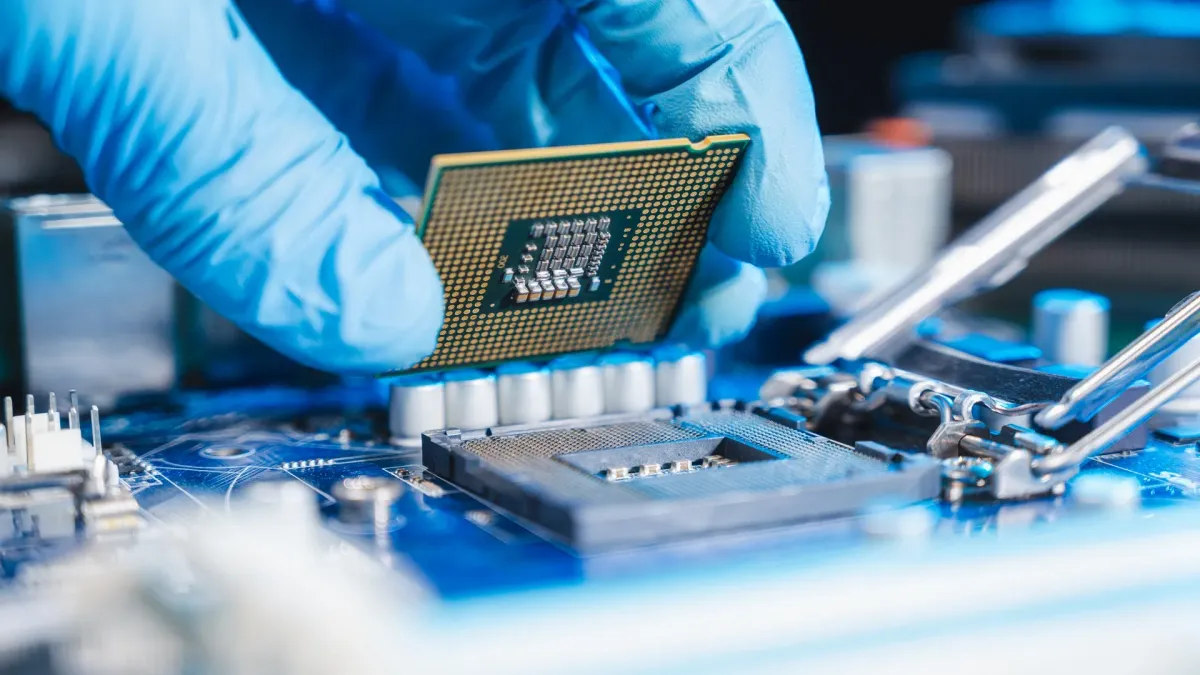

Researchers at the University of Sydney just built something that sounds like science fiction: a computer chip that thinks with light instead of electricity.

The nanophotonic chip performs AI calculations as photons travel through tiny structures, with operations happening in picoseconds, or trillionths of a second. No electrons bouncing through wires. Far less of the heat that drives massive cooling systems. Just light doing the math.

This matters because AI is drowning in electricity. Data centers running large language models consume staggering amounts of power, and a large share of it goes to cooling. Traditional silicon chips push electric current through circuits, and the circuits' resistance turns part of that energy into heat. Removing that heat takes energy-intensive cooling, which draws even more power. It's a vicious cycle.

The photonic approach breaks it. Instead of routing electrons through wires, the chip guides photons through nanostructures only tens of micrometers wide, about the thickness of a human hair. As light passes through, the structures themselves perform the calculations. No separate processing step. No resistance. Minimal heat.

How It Actually Works

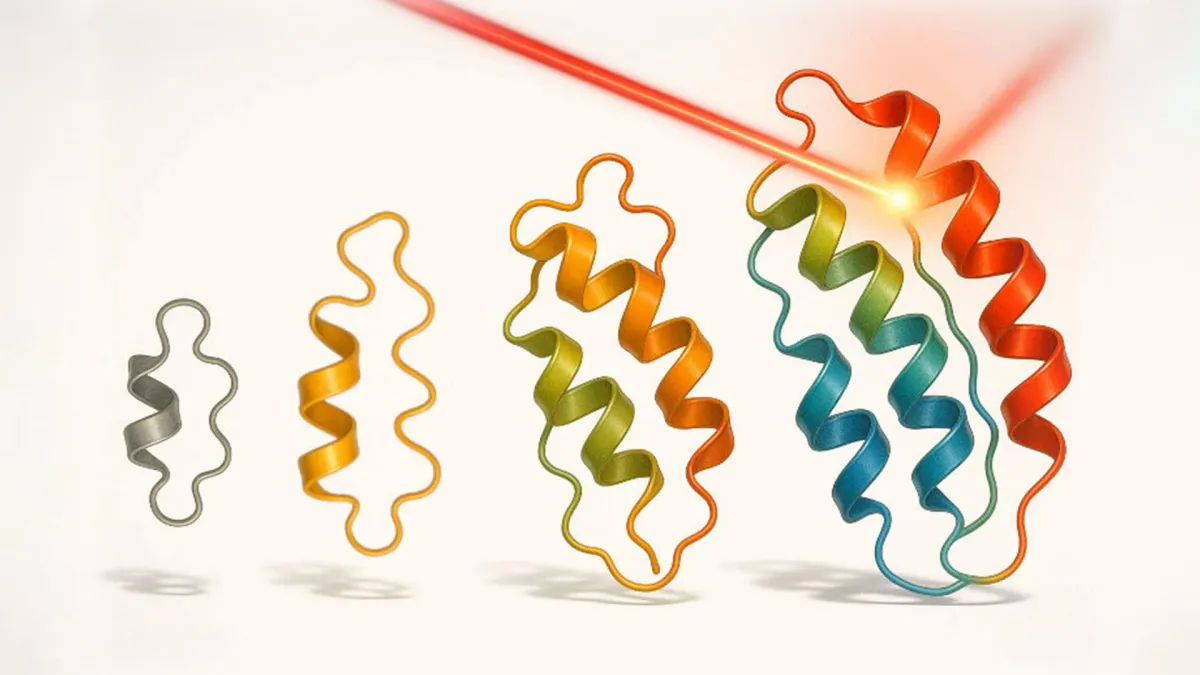

The chip's architecture loosely mimics how the brain processes information. The physical layout of the nanostructures acts as a network of artificial neurons, so pattern recognition and classification happen as light travels across the device. It's neural computation embedded directly into the hardware, not running as software on top of it.
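To make "computation embedded in the hardware" a little more concrete, here is a toy numerical model in Python. It is not the Sydney team's design, which the article does not describe. It assumes a generic scheme: pixel brightness is encoded as light intensity, the fixed nanostructure behaves like an idealized lossless linear layer (a unitary matrix, here random and untrained), and photodetectors read out intensity at each output. The function names `random_lossless_layer`, `photonic_forward`, and `classify` are hypothetical.

```python
import numpy as np


def random_lossless_layer(n: int, seed: int = 0) -> np.ndarray:
    """Hypothetical stand-in for a fixed nanostructure: a random n x n unitary matrix.

    A lossless, passive optical structure conserves power, so its transfer
    matrix is unitary. Here the "weights" are random; on a real device they
    would be set by training and baked into the physical layout.
    """
    rng = np.random.default_rng(seed)
    z = rng.normal(size=(n, n)) + 1j * rng.normal(size=(n, n))
    q, r = np.linalg.qr(z)
    # Multiply each column of q by a pure phase; the result is still unitary.
    phases = np.diag(r) / np.abs(np.diag(r))
    return q * phases


def photonic_forward(layer: np.ndarray, pixels: np.ndarray) -> np.ndarray:
    """Send light through the layer and return the intensity at each detector."""
    # Assumption: brightness sets optical intensity, so field amplitude = sqrt(brightness).
    field_in = np.sqrt(np.asarray(pixels, dtype=float))
    # Propagation through a linear optical structure is a matrix-vector product.
    field_out = layer @ field_in
    # Photodetectors measure |E|^2, a nonlinearity that comes with the readout.
    return np.abs(field_out) ** 2


def classify(layer: np.ndarray, pixels: np.ndarray) -> int:
    """Predicted class = index of the brightest detector."""
    return int(np.argmax(photonic_forward(layer, pixels)))


layer = random_lossless_layer(4)
pixels = np.array([0.9, 0.1, 0.4, 0.0])  # a tiny made-up 4-pixel "image"
intensities = photonic_forward(layer, pixels)
print(round(float(intensities.sum()), 6))  # 1.4: a lossless layer only redistributes light
print(classify(layer, pixels))
```

The detectors together receive the same 1.4 units of power that went in, because an ideal lossless structure only redistributes light. In this toy picture, training would amount to shaping the structure so that the brightest detector marks the correct class.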

To test the prototype, the team trained it on over 10,000 medical images: MRI scans of the breast, chest, and abdomen. The photonic neural network classified the images with 90 to 99 percent accuracy. Each calculation took place on the picosecond timescale. That's not just fast. The computation finishes in roughly the time it takes light to cross the chip.
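A quick back-of-envelope calculation shows why a single pass of light lands on the picosecond scale. The article gives only the structure size ("tens of micrometers"); the refractive index of about 3.48 (roughly silicon at telecom wavelengths) is an assumption, and the sketch ignores the time needed to generate and detect the light.

```python
# Speed of light in vacuum in metres per second (exact, by SI definition).
C_VACUUM = 299_792_458.0


def transit_time_ps(length_um: float, refractive_index: float) -> float:
    """Time for light to cross a structure of the given length, in picoseconds."""
    speed = C_VACUUM / refractive_index  # light slows by a factor n inside the material
    seconds = (length_um * 1e-6) / speed
    return seconds * 1e12


# Assumption: n = 3.48 (approximately silicon); lengths span "tens of micrometers".
for length_um in (10, 50, 100):
    print(f"{length_um:>3} um -> {transit_time_ps(length_um, 3.48):.2f} ps")
```

This prints about 0.12, 0.58 and 1.16 ps, which sits right at the picosecond scale the researchers report.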

"Artificial intelligence is increasingly constrained by energy consumption," says Professor Xiaoke Yi, who leads the Photonics Research Group. "This research performs neural computation using light, enabling faster, more energy-efficient and ultra-compact AI accelerators."

The team has spent over a decade exploring photonics for computing. This prototype is the proof of concept. Next comes scaling—building larger photonic neural networks that can handle more complex datasets.

If this technology scales, photonic chips could eventually complement or replace traditional processors for certain AI workloads. The payoff isn't just speed. It's a fundamental reduction in the electricity and cooling demands that currently make AI infrastructure so resource-intensive. Light travels through materials without electrical resistance, so heat generation drops dramatically compared to electronic chips.

We're still in prototype territory, but the direction is clear: computing infrastructure that runs cooler, faster, and on far less power. That's the kind of progress that actually changes what's possible.