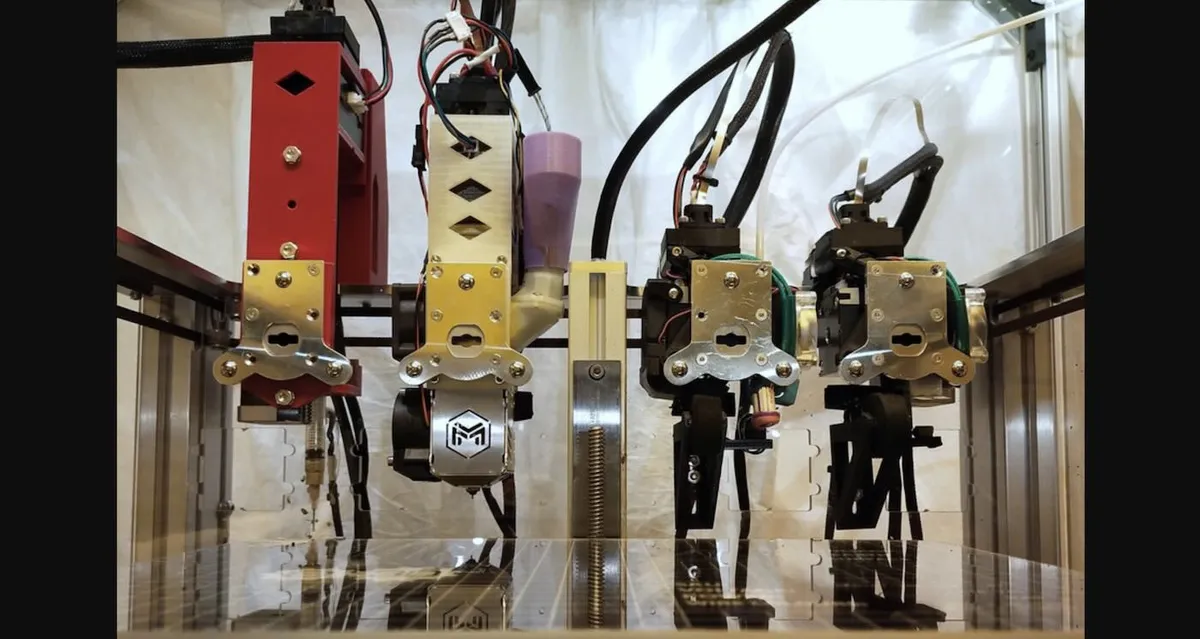

Every time you take a photo on your phone, you're using technology that NASA scientists invented to see deeper into space. The CMOS image sensor—the tiny chip behind virtually every smartphone camera, car safety system, and medical device on Earth—began as a solution to a specific problem: how to capture images from Mars rovers and deep-space telescopes without draining power or breaking under radiation.

In 1990, engineer Eric Fossum arrived at NASA's Jet Propulsion Laboratory tasked with improving the camera technology that had made the Hubble Space Telescope famous. The existing technology, the charge-coupled device (CCD) sensor, worked brilliantly for science but demanded enormous power and complex engineering. Fossum didn't just improve it. He invented something fundamentally different.

His insight was elegant: instead of moving electrical signals across the entire chip to a central processor, what if each pixel could amplify its own signal right where it sat? This CMOS approach meant lower power consumption, better radiation resistance, and the potential to be manufactured cheaply. The problem was noise: early versions produced too much signal degradation for serious scientific work.

Fossum solved the noise problem by borrowing a technique from the old CCD design: measuring each pixel's voltage twice (once in darkness, once with light) and taking the difference to cancel out interference. It worked. By 1995, he and colleague Sabrina Kemeny had licensed the technology, founded a company called Photobit, and begun refining it for the real world.
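To see why measuring twice helps, here is a minimal Python sketch of the idea. It is an illustration only: the pixel model, the noise levels, and every function name are assumptions made for this example, not details of Fossum's actual design. Each simulated pixel carries its own fixed offset, so a single reading is badly off, while the difference between a dark reading and a lit reading cancels that offset.

```python
import random

def read_pixels(true_signal, offsets, read_noise, rng):
    """Simulate one readout: signal + that pixel's fixed offset + a little random noise."""
    return [s + o + rng.gauss(0.0, read_noise) for s, o in zip(true_signal, offsets)]

def double_sample(true_signal, offsets, read_noise=0.5, seed=0):
    """Read every pixel twice (dark, then lit) and subtract the dark reading."""
    rng = random.Random(seed)
    dark = read_pixels([0.0] * len(true_signal), offsets, read_noise, rng)
    lit = read_pixels(true_signal, offsets, read_noise, rng)
    corrected = [l - d for l, d in zip(lit, dark)]
    return lit, corrected

def mean_abs_error(measured, truth):
    """Average distance between the measured values and the true values."""
    return sum(abs(m - t) for m, t in zip(measured, truth)) / len(truth)

# Hypothetical scene: 1,000 pixels with made-up brightness and per-pixel offsets.
rng = random.Random(42)
true_signal = [rng.uniform(50.0, 200.0) for _ in range(1000)]
offsets = [rng.gauss(0.0, 20.0) for _ in range(1000)]

raw, corrected = double_sample(true_signal, offsets)
raw_error = mean_abs_error(raw, true_signal)
corrected_error = mean_abs_error(corrected, true_signal)

print(f"single reading error: {raw_error:.2f}")
print(f"double reading error: {corrected_error:.2f}")
```

In this toy model the subtraction removes each pixel's fixed offset entirely, leaving only the small random noise from the two readings. Real sensors face additional noise sources that this sketch does not attempt to capture.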

From lab to everywhere

When Micron Technology acquired Photobit in 2001 and invested heavily in manufacturing, the timing proved perfect. Smartphones were about to explode into existence, and they needed cameras. CMOS sensors appeared first in webcams and then in those tiny "pill cameras" that patients swallow so doctors can photograph the digestive tract. By 2013, manufacturers were producing over one billion sensors annually. Today it's seven billion per year, roughly one for every person on Earth.

The technology is now woven into the fabric of daily life in ways most people never think about. It's in your car's backup camera and collision detection system. It's in security cameras, sports footage, professional cinema, and the medical imaging that helps doctors diagnose disease. It's in the devices NASA still uses to explore Mars, monitor the Sun, and search for signs of life on distant moons.

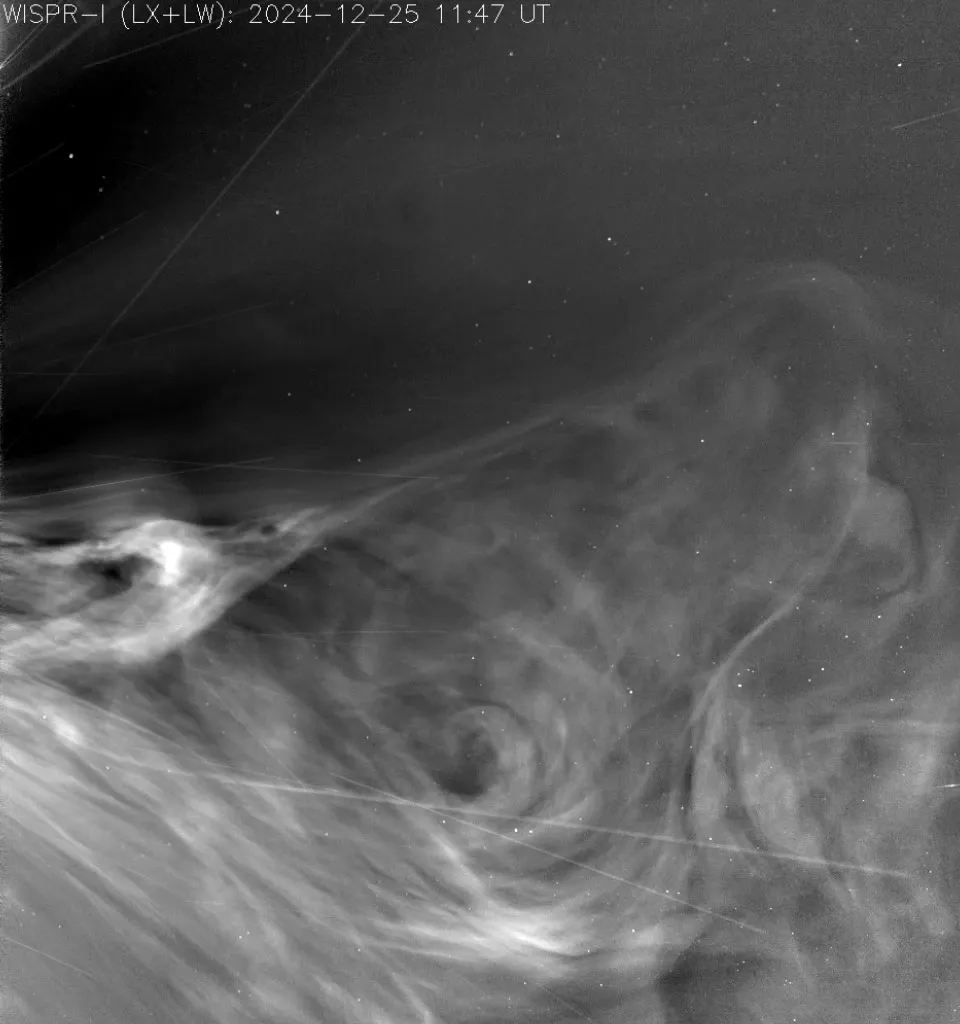

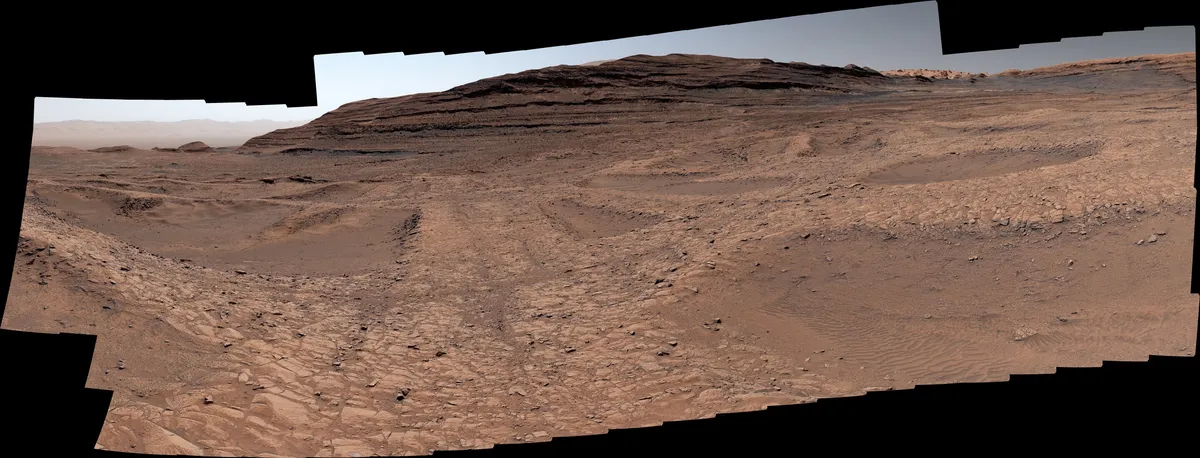

The Perseverance rover landing on Mars relied on CMOS imagers to navigate safely. The Parker Solar Probe uses them to study the Sun's corona. Europa Clipper, heading to Jupiter's icy moon, carries CMOS cameras designed to look for conditions that might support life. Smaller missions like Pandora (studying exoplanet atmospheres) and BLACKCAT (an X-ray telescope) depend on descendants of Fossum's original innovation.

In 2026, the National Academy of Engineering will award Fossum the Charles Stark Draper Prize, one of engineering's highest honors, for his work. The prize recognizes engineers whose innovations significantly improve quality of life and expand access to information. Few technologies have done both as thoroughly as the camera chip that started as a way to see farther into space and ended up in nearly every pocket on Earth.