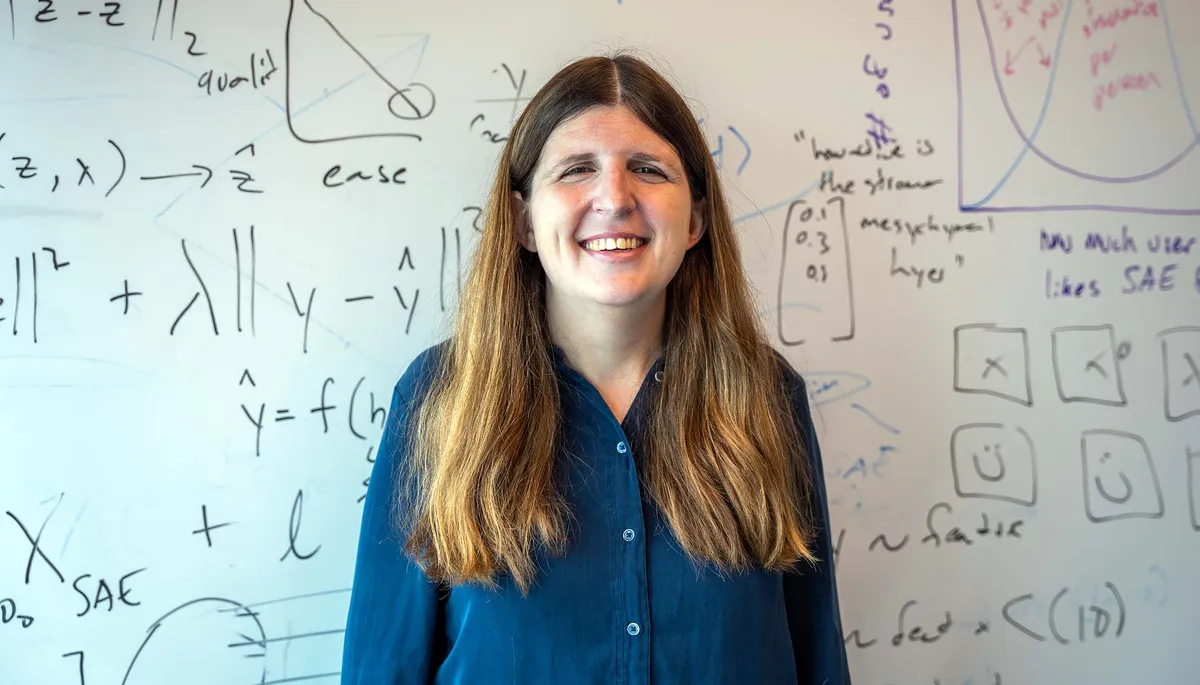

Emma Pierson was studying distant galaxies when a genetic test changed her direction. The test revealed a BRCA1 mutation and, with it, a high risk of breast and ovarian cancers, the kind of diagnosis that makes abstract research feel suddenly personal. She switched from physics to computer science but kept the same question: how do you use data to understand complex systems?

Now, as an assistant professor at UC Berkeley, she's tackling one of AI's most stubborn problems: algorithms that inherit human bias instead of correcting it. The challenge isn't theoretical. It's about whether a person gets screened for cancer, whether a driver gets searched at a traffic stop, whether someone's risk gets calculated fairly.

The Data Tells a Story

Early in her graduate work at Stanford, Pierson started analyzing traffic stop data from the Stanford Open Policing Project. The numbers were stark: drivers perceived as Hispanic were significantly more likely to be searched and arrested than those perceived as white, even when controlling for other factors. She and collaborator Nora Gera developed a new method to distinguish whether these disparities came from discrimination or other causes. The answer was clear enough to redirect her career.


The problem with AI bias is deceptively simple: algorithms trained on biased data make biased decisions. A healthcare algorithm built on historical data that reflects existing inequalities will perpetuate those inequalities. Many of these systems are proprietary, locked away in hospitals and courtrooms and impossible to audit.

But Pierson points to something counterintuitive: algorithmic bias is often easier to find and fix than human bias. When a human doctor makes a biased decision, it's buried in judgment and context. When an algorithm does it, you can trace the path, see where it went wrong, adjust the weights. The bias becomes visible.

The Harder Question

This doesn't mean the solutions are simple. Pierson's research turned up a result many people did not expect: removing race from a medical algorithm didn't reduce disparities. It actually made them worse. When she tested an algorithm that ignored race, it under-predicted cancer risk for Black patients.

Why? Because race is correlated with access to healthcare, screening history, and other factors that affect disease presentation. Pretending those disparities don't exist doesn't make them go away. It just makes the algorithm blind to them.
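A toy simulation can make this mechanism concrete. It is not Pierson's model or data. Everything below is a hypothetical assumption: two groups with the same underlying health, a "recorded score" that understates group B's health because of less access to care and screening, and a simple estimator that predicts risk as the observed outcome rate within each bin. A model that sees only the recorded score ends up under-predicting risk for group B. Adding group membership lets it correct.

```python
import math
import random
from collections import defaultdict


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def simulate(n=20000, under_record=1.0, seed=0):
    """Hypothetical patients as (group, recorded_bin, outcome) tuples.

    Both groups share the same latent health distribution, and true risk
    depends only on that latent value. Group "B" has less recorded history
    (an assumed stand-in for less access to care), so its recorded score
    sits `under_record` below its latent value.
    """
    rng = random.Random(seed)
    patients = []
    for _ in range(n):
        group = rng.choice("AB")
        latent = rng.gauss(0.0, 1.0)
        recorded = latent - (under_record if group == "B" else 0.0)
        outcome = 1 if rng.random() < sigmoid(latent - 1.0) else 0
        patients.append((group, round(recorded), outcome))  # round() = coarse bins
    return patients


def fit_bins(patients, key):
    """Predict risk as the observed outcome rate within each bin given by key."""
    totals = defaultdict(lambda: [0, 0])  # bin -> [outcomes, count]
    for p in patients:
        totals[key(p)][0] += p[2]
        totals[key(p)][1] += 1
    return {k: s / c for k, (s, c) in totals.items()}


def calibration_by_group(patients, model, key):
    """Return {group: (mean predicted risk, observed outcome rate)}."""
    acc = defaultdict(lambda: [0.0, 0, 0])  # group -> [sum pred, outcomes, count]
    for p in patients:
        a = acc[p[0]]
        a[0] += model[key(p)]
        a[1] += p[2]
        a[2] += 1
    return {g: (pred / n, obs / n) for g, (pred, obs, n) in acc.items()}


def blind_key(p):
    return p[1]  # recorded score only: the model never sees the group


def aware_key(p):
    return (p[1], p[0])  # recorded score plus group membership


patients = simulate()
for name, key in [("group-blind", blind_key), ("group-aware", aware_key)]:
    model = fit_bins(patients, key)
    for g, (pred, obs) in sorted(calibration_by_group(patients, model, key).items()):
        print(f"{name:11s} group {g}: predicted {pred:.3f}, observed {obs:.3f}")
```

In the group-blind model, patients from both groups who share a recorded score get the same prediction, even though group B's true health is worse at that score. Group B is therefore under-predicted and group A over-predicted. The group-aware model separates the two, so in this in-sample toy its predictions match each group's observed rate.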

The real solution is messier: transparency. Pierson's research shows patients are often comfortable with race being used in medical algorithms—as long as doctors explain exactly how and why. The goal isn't a colorblind algorithm. It's one that accounts for the world as it actually is, not as we wish it were.

"I think it's fair to say that in a world where we had access to all data for all people and everything was perfectly equitable, we would not need to use race in algorithms," Pierson said. "But it is also important to consider the world we are actually in and design algorithms that help us make the best decisions for patients today with the data we actually have."

Her work suggests the path forward isn't removing human context from medicine. It's making that context explicit, auditable, and fair. The algorithms that will actually reduce inequality are the ones that look directly at where inequality exists—and then work to correct it.