For nearly 60 years, the Luna 9 spacecraft has been lost somewhere on the moon. It shouldn't be: it was the first human-made object to make a soft landing there, back in February 1966. But the Soviets' landing technique, combined with outdated calculations and decades of uncertainty, meant that no one could pinpoint exactly where it came to rest.

Now, an international team of researchers using artificial intelligence may finally be closing in. They've trained a machine learning algorithm on thousands of lunar images, teaching it to recognize the subtle surface disturbances that a spacecraft leaves behind. The algorithm—called YOLO-ETA, short for You-Only-Look-Once Extraterrestrial Artifact—has narrowed the search area down to a handful of promising locations. Their findings were published recently in npj Space Exploration.

A 60-year-old mystery

The Luna 9 story is one of Cold War drama and technical ingenuity. While the United States eventually won the race to land humans on the moon, the Soviets got there first with a robotic spacecraft. Luna 9 bounced across the lunar surface on inflatable shock absorbers, a clever design that let it survive the impact, and sent back the first photographs from the surface of another world. It was a genuine achievement, the kind that should have been easy to study and learn from for generations.

But then it vanished, at least in any practical sense. The Soviets published their estimated landing coordinates in Pravda, but the coordinates were wrong. When NASA's Lunar Reconnaissance Orbiter began mapping the moon in high detail in 2009, it confirmed what researchers had suspected: Luna 9 wasn't where it was supposed to be. It might be miles away.

Training a machine to spot the invisible

Lewis Pinault, a data scientist at University College London, decided to approach the problem differently. Instead of manually searching through thousands of images, he built an algorithm that could learn what a lunar lander actually looks like from above. He trained YOLO-ETA on imagery of Apollo landing sites, places where we know exactly where the lander touched down and what it left behind. The algorithm learned to spot the subtle signs: disturbed soil, shadows cast by equipment, the faint marks of descent thrusters.

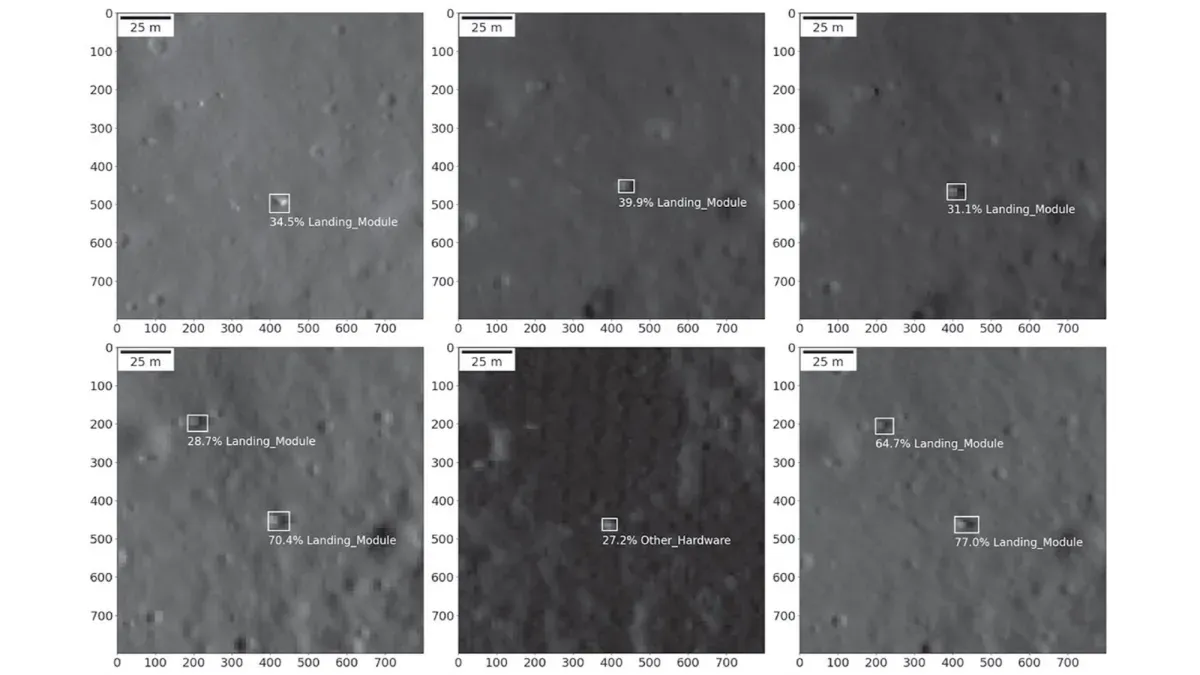

When researchers tested it on unfamiliar images, including photos of the Luna 16 spacecraft that landed in 1970, the algorithm performed with striking accuracy. It could identify lander signatures that would be nearly invisible to a human eye scanning through thousands of square miles of crater-marked terrain.

Then came the real test. The team asked YOLO-ETA to scan the roughly 3.1-by-3.1-mile search area where Luna 9 was supposed to be. The algorithm returned multiple candidate locations, each showing potential signs of artificial disturbance on the lunar surface.
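For readers curious how a scan like this can be organized, here is a minimal Python sketch. It is not the team's code. It assumes the search area is treated as a square about 5,000 meters on a side (roughly 3.1 miles), cut into overlapping tiles, with a detector run on each tile and nearby hits merged. The tile size, overlap, confidence threshold, merge radius, and the `fake_detector` stand-in are all hypothetical values chosen for illustration.

```python
import math
from typing import Callable, List, Tuple

# (x_m, y_m, confidence): position in meters from one corner of the search area.
Detection = Tuple[float, float, float]
# Hypothetical detector interface: given a tile's origin and size, return
# detections positioned relative to that tile's origin.
Detector = Callable[[float, float, float], List[Detection]]


def tile_origins(extent_m: float, tile_m: float, overlap_m: float) -> List[float]:
    """Start offsets of overlapping tiles that together cover [0, extent_m]."""
    if overlap_m >= tile_m:
        raise ValueError("overlap must be smaller than the tile size")
    step = tile_m - overlap_m
    origins = []
    pos = 0.0
    while pos + tile_m < extent_m:
        origins.append(pos)
        pos += step
    # Final tile sits flush with the far edge so nothing is left uncovered.
    origins.append(max(extent_m - tile_m, 0.0))
    return origins


def merge_duplicates(dets: List[Detection], radius_m: float) -> List[Detection]:
    """Keep the strongest detection within each radius_m neighborhood."""
    kept: List[Detection] = []
    for x, y, conf in sorted(dets, key=lambda d: d[2], reverse=True):
        if all(math.hypot(x - kx, y - ky) > radius_m for kx, ky, _ in kept):
            kept.append((x, y, conf))
    return kept


def scan_area(detector: Detector, extent_m: float = 5000.0, tile_m: float = 1000.0,
              overlap_m: float = 200.0, min_conf: float = 0.5,
              merge_radius_m: float = 20.0) -> List[Detection]:
    """Run the detector on every tile and return merged candidate locations."""
    candidates: List[Detection] = []
    origins = tile_origins(extent_m, tile_m, overlap_m)
    for y0 in origins:
        for x0 in origins:
            for dx, dy, conf in detector(x0, y0, tile_m):
                if conf >= min_conf:
                    # Convert tile-relative position to search-area position.
                    candidates.append((x0 + dx, y0 + dy, conf))
    return merge_duplicates(candidates, merge_radius_m)


def fake_detector(x0: float, y0: float, tile_m: float) -> List[Detection]:
    """Stand-in for a trained model: 'finds' two planted targets (made-up data)."""
    targets = [(900.0, 900.0, 0.8), (3000.0, 4100.0, 0.3)]
    return [(tx - x0, ty - y0, conf) for tx, ty, conf in targets
            if x0 <= tx < x0 + tile_m and y0 <= ty < y0 + tile_m]
```

In this toy setup, the stronger planted target falls inside four overlapping tiles but is reported once, and the weaker one is dropped by the confidence threshold. A real pipeline would swap the stand-in for a trained model and work in image pixels and lunar coordinates rather than a flat meter grid.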

The wait won't stretch another 60 years. India's Chandrayaan-2 orbiter is scheduled to pass over the area in March 2026 as part of its ongoing mapping mission. When it does, researchers will have high-definition imagery to compare against the algorithm's predictions. One of those candidate locations is likely to be Luna 9, finally answering a question that's been hanging in the lunar dust for decades.