Artificial intelligence is getting smarter, more pervasive, and, let's be honest, a lot hungrier. We're talking about an energy appetite so vast that AI data centers are expected to double their power consumption by 2030. Which, if you think about it, is both impressive and slightly terrifying.

Meanwhile, the human brain, that squishy, organic supercomputer sitting in your skull, chugs along on a mere 20 watts of power. That's less than most old-school incandescent light bulbs used. While you're busy contemplating the universe, your brain is doing it on about the power of a dim light bulb. Modern computers? Not so much.

Why Your Brain is an Energy-Saving Genius

For decades, computers have kept their "thinking" (processing) and "remembering" (memory) functions separate. Data constantly shuffles back and forth between these two areas, like a librarian running between the reference desk and the archives for every single question. It's slow, and it guzzles power.

The brain, however, is far more elegant. Its neurons connect via synapses, which simultaneously handle both memory and processing. It's like the librarian is the archive, instantly recalling and connecting information without ever leaving their seat. This allows the brain to learn, adapt, and operate with astonishing efficiency.
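For the curious, the librarian analogy can be put into a few lines of Python. This is only a toy sketch, not a model of any real chip: the function names are made up for illustration, and the "trip" counter tracks only how often a stored value has to be fetched from a separate memory. Both versions compute the same weighted sum; the difference is how much running back and forth it takes.

```python
def weighted_sum_separate(stored_weights, inputs):
    """Toy 'separate memory' design: every stored weight is fetched before use."""
    trips = 0
    total = 0.0
    for index, signal in enumerate(inputs):
        weight = stored_weights[index]  # the librarian's run to the archives
        trips += 1                      # count one memory-to-processor trip
        total += weight * signal
    return total, trips


def weighted_sum_in_place(stored_weights, inputs):
    """Toy 'brain-like' design: the multiply happens where the weight is stored."""
    total = sum(weight * signal for weight, signal in zip(stored_weights, inputs))
    return total, 0  # nothing is hauled between a memory and a processor


# Hypothetical numbers, chosen only to make the comparison visible.
weights = [0.5, 1.0, 2.0]
signals = [2.0, 3.0, 1.0]

print(weighted_sum_separate(weights, signals))
print(weighted_sum_in_place(weights, signals))
```

The answers match, but the first version pays for a trip on every single value. Real hardware is far messier than this, and the sketch says nothing about actual energy figures.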

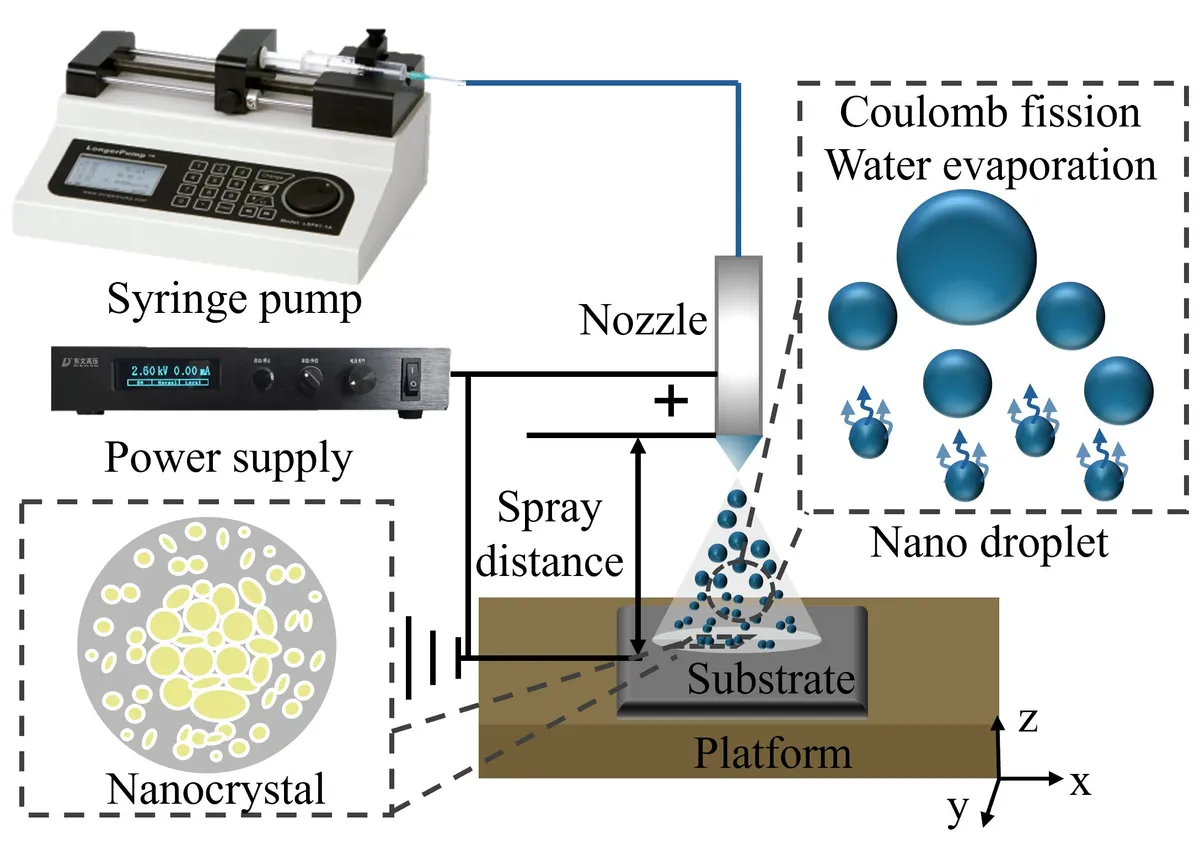

Suchi Guha, a physics professor at the University of Missouri, and her team are trying to mimic this biological brilliance with something called "neuromorphic computing." They're not just trying to make transistors faster; they're trying to make them smarter.

They're building organic transistors that can store and process information in the same spot, just like your brain's synapses. It's a fundamental shift from the traditional computer architecture that's served us well for decades but is now hitting its limits.
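To get a feel for what "store and process in the same spot" means, here is a deliberately simple Python sketch. It is not the Missouri team's device model; the class name `ToySynapse`, the step size, and the cap are all assumptions made up for illustration. The point is that a single number, the connection strength, acts as both the memory and the thing doing the computing.

```python
class ToySynapse:
    """Hypothetical synapse-like element: one stored value does both jobs."""

    def __init__(self, strength=0.25, step=0.25, max_strength=1.0):
        self.strength = strength          # the "memory"
        self.step = step                  # how much one pulse strengthens it
        self.max_strength = max_strength  # strength cannot grow forever

    def respond(self, signal):
        # "Processing": the output is the input scaled by the stored strength.
        return self.strength * signal

    def pulse(self):
        # "Learning": repeated use strengthens the connection, up to the cap.
        self.strength = min(self.max_strength, self.strength + self.step)


synapse = ToySynapse()
before = synapse.respond(2.0)  # response while the connection is still weak
synapse.pulse()                # one learning pulse
after = synapse.respond(2.0)   # same input, stronger response
print(before, after)
```

Nothing is copied out to a separate memory and back: the same value that remembers past pulses also shapes the response to the next signal.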

The Secret Sauce: Where Materials Meet

The researchers didn't just throw some organic compounds at the problem. They meticulously tested different materials for their synaptic transistors, and what they found was fascinating. Even materials that looked similar on paper performed wildly differently.

The key, it turns out, was the "interface" — that incredibly thin boundary where the semiconductor meets an insulating layer within the device. It's not just about what a material is made of; it's about how it interacts with its surroundings. Subtle structural differences at this microscopic handshake point can have a massive impact on performance.

This research is a crucial step for other scientists building the next generation of neuromorphic hardware. Imagine AI that doesn't just learn, but learns efficiently, using a fraction of the power. AI that's better at pattern recognition because it's wired more like a human. Brain-inspired computing is still in its early days, but these advances are steadily bridging the gap between biology and machines. Because when it comes to efficient computing, the original model — the one inside your head — is still the undisputed champion.