A biocomputer made from human neurons just crossed a threshold that matters more than it sounds: it learned to play Doom, the 1993 shooter that's become tech's unofficial test of "can this thing actually compute?"

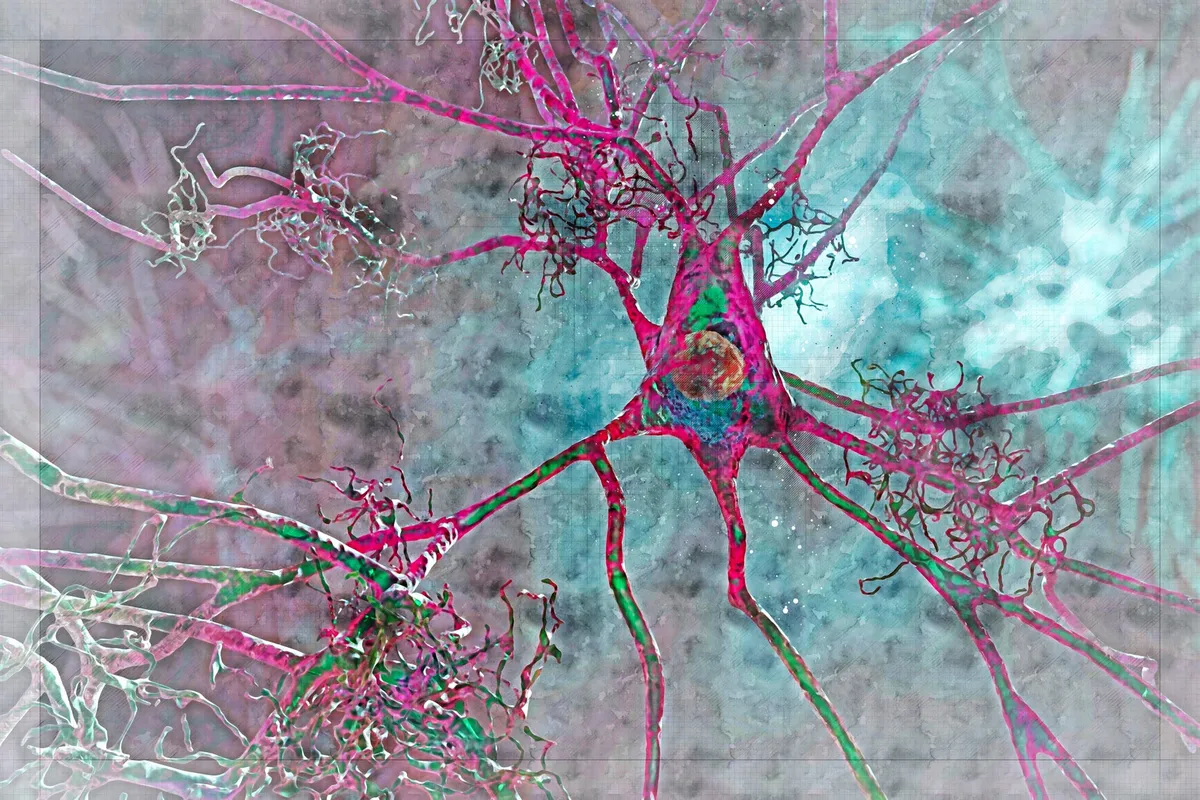

The system, called CL1, was built by Cortical Labs in Australia using around 800,000 human brain cells grown in a lab and wired to a processing chip. It can't beat the game—it loses plenty—but it learned to aim, dodge, and improve in real time. That's the part researchers find significant. "This demonstrated adaptive, real-time goal-directed learning," Brett Kagan, the lab's chief scientific officer, said in announcing the result.

Why Doom Matters (and Why It Doesn't)

The journey here started in 2021 when Cortical Labs got their first biocomputer, called DishBrain, to play Pong. That took 18 months. Getting from Pong to Doom might sound like a small step—it's the same leap gamers made 30 years ago—but it represents something genuinely different: the system had to learn to interpret visual information it had never seen before, convert that into electrical patterns the neurons could understand, and then respond in real time.

Here's where it gets interesting: a developer named Sean Cole, someone with minimal experience in biological computing, solved the visual translation problem in about a week using a new Python-based interface Cortical Labs created. That speed matters. It suggests the barrier to working with biocomputers is dropping fast. You don't need a PhD in neuroscience to program neurons anymore.

The biocomputer's actual Doom performance is humble—it plays better than random firing, but loses frequently. Yet Cortical Labs reports it learned faster than silicon-based machine learning systems would have at the same task. That's the real signal. We're not watching a computer beat humans at games. We're watching biological tissue develop competence at something it had no evolutionary reason to understand.

This matters because the researchers aren't chasing high scores. They're building toward hybrid systems that could eventually control robotic limbs, process complex data, or run programs in ways that combine the efficiency of biological neurons with the precision of silicon. A brain cell uses about 100,000 times less energy than a transistor doing equivalent work. Scale that up, and the implications for power consumption alone become significant.

The gap between playing Doom and powering practical technology is still enormous. But each milestone—Pong, then Doom, then whatever comes next—isn't really about the games. It's proof that we can grow human neurons in a dish, teach them to solve novel problems, and do it fast enough that other researchers can build on the work. That's the learning curve that matters.

The next question isn't whether biocomputers will play harder games. It's what happens when they stop playing games altogether.