Scientists at the University of Connecticut have built an imaging system that does something cameras have never managed: it captures microscopic detail from a distance without a single lens in sight.

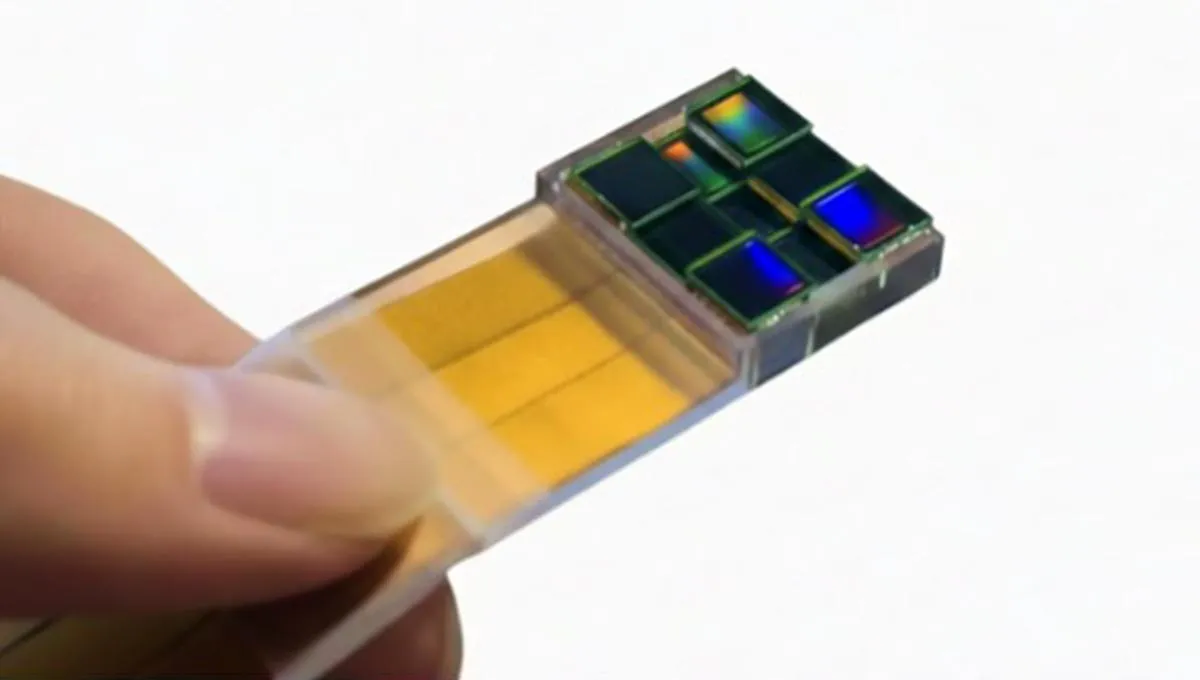

The breakthrough comes from a deceptively simple idea. Instead of using glass optics to focus light—the way every camera from your phone to the Hubble telescope works—Professor Guoan Zheng's team uses multiple tiny sensors spread across a small area, each collecting light independently. Then software does the heavy lifting, stitching those observations together after the fact to create an image with sub-micron resolution.

The system is called MASI, short for Multiscale Aperture Synthesis Imager, and it's inspired by the same principle that let astronomers photograph a black hole in 2019. That breakthrough used radio telescopes scattered across the globe, combining their signals computationally to simulate one impossibly large telescope. The problem: that trick has never worked well with visible light. Radio waves are long and forgiving; visible light operates at a scale where even tiny misalignments destroy the image. Keeping multiple optical sensors perfectly synchronized during measurement is technically brutal—it's why we've relied on lenses for centuries.

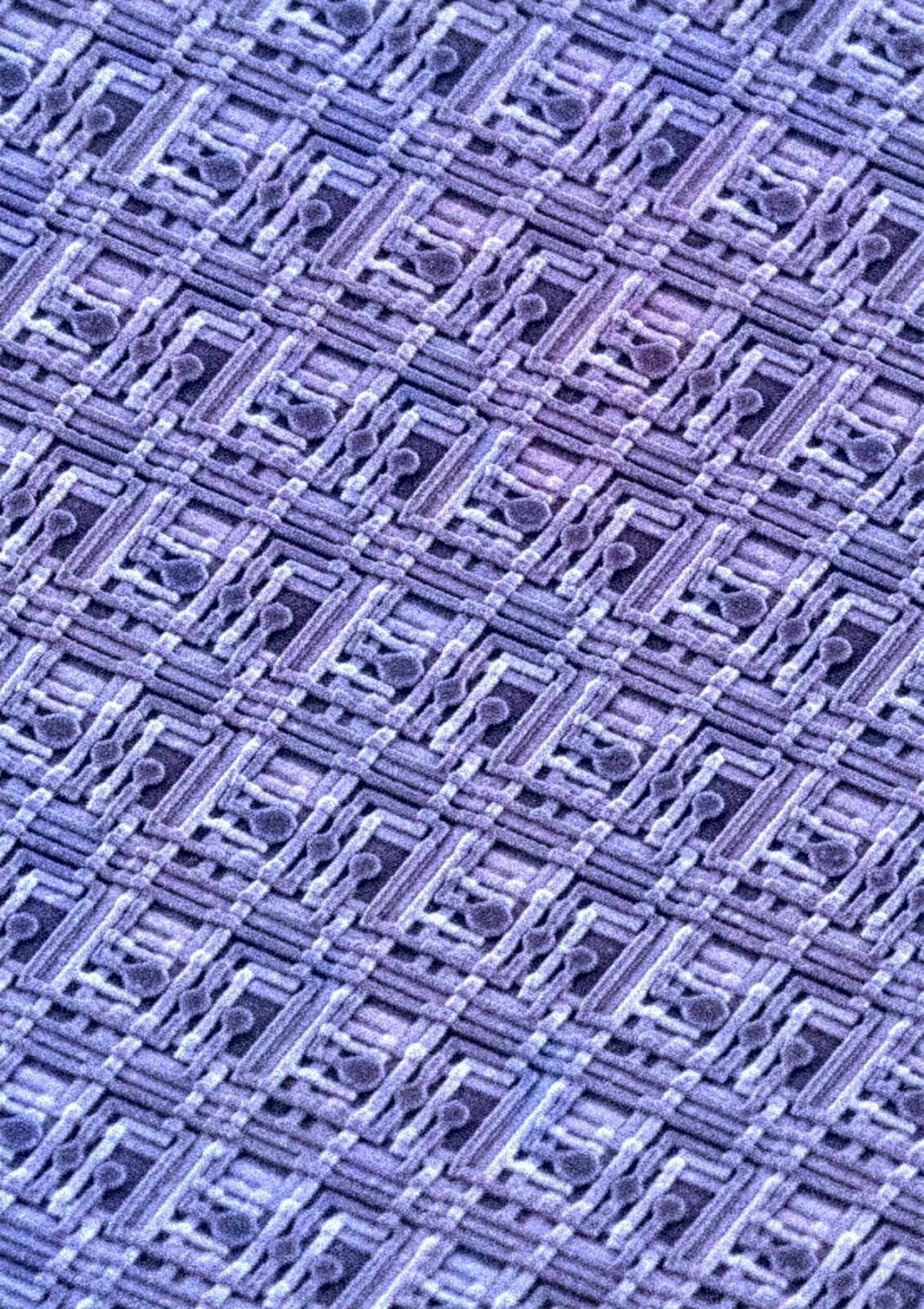

MASI sidesteps the entire problem. Each sensor records diffraction patterns, essentially the fingerprint of how light spreads after bouncing off an object. These patterns contain the information needed to reconstruct fine detail. The synchronization happens afterward, in the computer, where precision is far cheaper to achieve than in hardware.
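To make "synchronizing in the computer" concrete, here is a toy Python sketch. It is not the team's algorithm. It assumes each sensor's complex light field has already been recovered from its diffraction patterns and brought to a common plane, and that one sensor differs from a reference only by a single unknown phase offset. The function names and the simulated data are hypothetical.

```python
import cmath
import random


def estimate_phase_offset(reference, field):
    """Return the constant phase (radians) by which `field` leads `reference`."""
    # Correlate the two fields; the angle of the sum is the shared offset.
    correlation = sum(r.conjugate() * f for r, f in zip(reference, field))
    return cmath.phase(correlation)


def align(field, offset):
    """Rotate every sample of `field` back by `offset` radians."""
    rotation = cmath.exp(-1j * offset)
    return [f * rotation for f in field]


# Simulated data (assumption): a random complex field seen by a reference
# sensor, and the same field seen by a second sensor with a 0.7 rad offset.
random.seed(0)
reference = [complex(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(64)]
true_offset = 0.7
field = [r * cmath.exp(1j * true_offset) for r in reference]

estimated = estimate_phase_offset(reference, field)
aligned = align(field, estimated)
print(f"estimated offset: {estimated:.3f} rad")
```

In this idealized, noise-free case the estimate matches the simulated offset, and the corrected field lines up with the reference. Real data would add noise and more unknowns, but the point stands: the alignment is a calculation, not a mechanical adjustment.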

"Instead of requiring sensors to remain perfectly synchronized during measurement, MASI allows each optical sensor to collect light on its own," Zheng explains. "Then computational algorithms align and synchronize the data after it has been captured." This separation of concerns—measure first, align later—removes the need for the rigid, expensive optical setups that have made traditional lens-free imaging impractical.

The result is a virtual aperture larger than any single sensor, achieving microscopic resolution while still capturing a wide field of view. Traditional lenses force engineers into a brutal trade-off: zoom in and you lose context; pull back and you lose detail. MASI doesn't have that constraint. It captures diffraction patterns from distances measured in centimeters and reconstructs images with detail measured in millionths of a meter.
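A rough back-of-the-envelope calculation shows why a larger virtual aperture matters. The sketch below uses the standard diffraction-limit estimate, resolution ≈ λ / (2 · NA), where the numerical aperture NA is set by the aperture width and the working distance. The 532 nm wavelength, the 2 cm distance, and the 5 mm and 20 mm aperture widths are illustrative assumptions, not figures from the MASI work.

```python
import math


def diffraction_limited_resolution(wavelength_m, aperture_m, distance_m):
    """Estimate the smallest resolvable feature as lambda / (2 * NA)."""
    # Half-angle of the cone of light the aperture collects from the object.
    half_angle = math.atan((aperture_m / 2) / distance_m)
    numerical_aperture = math.sin(half_angle)
    return wavelength_m / (2 * numerical_aperture)


WAVELENGTH = 532e-9  # green light, in meters (assumed)
DISTANCE = 0.02      # 2 cm working distance (assumed)

single = diffraction_limited_resolution(WAVELENGTH, 5e-3, DISTANCE)
synthetic = diffraction_limited_resolution(WAVELENGTH, 20e-3, DISTANCE)

print(f"single 5 mm sensor:      {single * 1e6:.2f} micrometers")
print(f"20 mm synthetic aperture: {synthetic * 1e6:.2f} micrometers")
```

Under these assumed numbers, a lone 5 mm sensor resolves features of roughly 2.1 micrometers, while a 20 mm synthetic aperture at the same distance reaches about 0.6 micrometers, which is sub-micron territory.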

What makes this genuinely significant is scalability. Conventional optics become exponentially more complex and expensive as they grow larger. MASI scales linearly—add more sensors, get a larger virtual aperture, no exponential cost creep. That opens doors to imaging arrays that would be impossible to build with traditional lenses.

The applications ripple across fields: forensic science could examine evidence at scales currently impossible; medical diagnostics could spot cellular-level detail in tissue samples; industrial inspection could catch manufacturing flaws before they matter; remote sensing could map terrain or detect changes from a distance. But the real excitement, Zheng suggests, is that we probably haven't imagined the most important applications yet. They'll emerge once the technology is available.

This isn't a minor tweak to existing camera technology. It's a fundamental rethinking of how optical imaging works, replacing precision mechanical engineering with precision software. The shift mirrors a broader pattern in science: computation increasingly solves problems that seemed to require physical solutions.